AI-Enabled Social Engineering: The Attacker's Stack

AI-enabled social engineering is getting cheaper and better at replacing humans.

Each layer of the attacker's stack offloads a distinct type of cognitive burden, removing the expertise, effort, and personnel that once limited the speed and scale of attacks. This piece examines what each layer does, why it works, and what the industrialization of these tools means for human defenders.

AI-enabled cybercrime is clearly crossing a threshold, moving from experimentation into industrialization. Anthropic’s recent evaluation of its Mythos model claims that it is “capable of identifying and then exploiting zero-day vulnerabilities in every major operating system and every major web browser when directed by a user to do so.” Last year, the FBI received over 22,000 complaints related to AI, with reported losses exceeding $893 million. Reports suggest that AI-generated content is present in 82.6% of phishing emails, with some noting that AI is the new attack surface.

These models are so widely available that they democratize cybercrime. Reports suggest that cybercriminals have largely adopted commercial LLMs, with jailbreak-as-a-service becoming a critical facilitator of crime. By adopting these systems, attackers not only offload the cognitive effort needed to maintain personas and pretexts but also free themselves to work faster and at a larger scale.

But attackers are just beginning to transition to industrialization. Defenders have a narrow window to respond. By understanding what attackers are building, defenders can narrow the gap before it becomes too wide.

Nowhere is that gap more exposed than in social engineering, where AI both reduces the cost of attacks and makes it harder for employees to detect deception at scale.

The Social Engineering Stack: From Bottom to Top

LLMs: Everything, Everywhere, All at Once.

The first entry point is content generation. Adversaries use LLMs to offload the cognitive work of creating convincing images and text. These systems can respond in real time, adapt to context and challenges, and maintain persona coherence across entire conversations.

Workers inside Southeast Asian scam compounds claim that ChatGPT is their primary tool, one that enables Mandarin-speaking operators to produce believable fraud personas in almost any language.

Crucially, early studies demonstrate that AI-generated attacks can achieve click-through rates comparable to those of expert human-created attacks. Because these attacks require less expertise, time, and money, the traditional barriers to social engineering fall away.

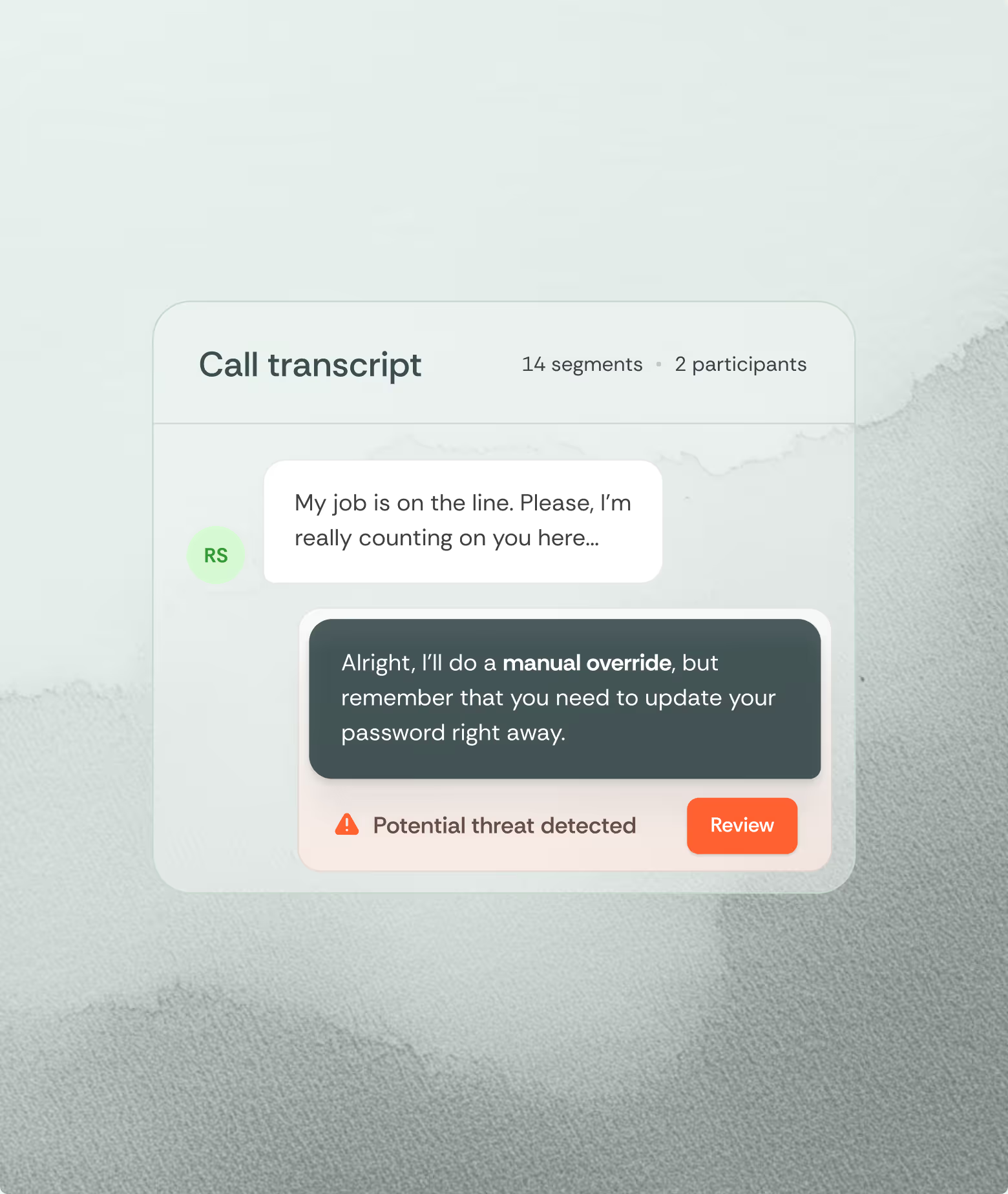

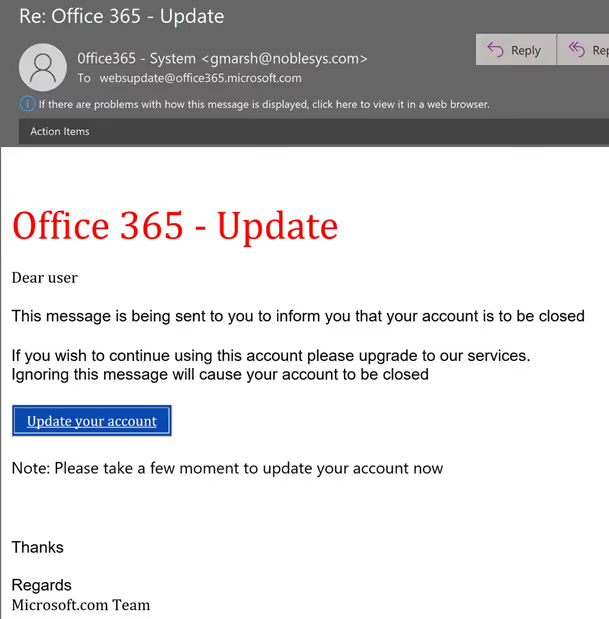

Humans authenticate text by pattern matching on the surface and deep structure of language: spelling, grammatical rules, consistent tone, and situational context. Generic wording, typos, and ungrammatical sentences provide evidence of a fake (Figure 1).

Modern LLMs all but eliminate these discrepancies given the right model and prompt. Subtle differences might create a dim sensation of discrepancy but might not be enough to trigger further scrutiny.

From your employee’s perspective, the social signals that they would normally use are gone. Awkward phrasing, pauses, implausible pretexts, and improper terminology can all be smoothed out with LLMs. And the best thing for attackers? These models are everywhere.

Guardrails pose a problem, but cottage industries have grown around jailbreaking systems to unlock their capabilities. Custom models can also be developed and hosted locally, removing protection layers while making platform-level monitoring increasingly difficult.

Synthetic Impersonation: When People Aren’t People.

Voice cloning and video deepfakes have garnered the most attention. Rather than static content, they provide all the social cues of a real human. Voice cloning now requires only seconds of sample audio to produce real-time synthetic dialogue that cannot be distinguished from a real employee's voice. Video deepfakes expand this capacity, allowing for adaptive face-swapping filters that run live across any video call.

Synthetic impersonation has multiple affordances. Instead of better scripts, high-fidelity perceptual features give deepfakes a sense of realism. Humans use many varied cues to identify each other: prosody, response latencies, cadence, and other idiosyncrasies of speech or facial expression. Each person’s interactional style acts like a fingerprint.

As AI becomes better at producing speech and sounds that avoid uncanny experiences, cognitive friction will decrease, leading to a sense of normalcy. The greater availability and awareness of these technologies will also change how identification and authorization are conducted. If the voice of a co-worker or CEO cannot be trusted, a new foundation of trust is required. As long as human or nonhuman agents are involved in resolving issues, we need to focus on security policy compliance rather than perceived familiarity.
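To make "policy compliance rather than perceived familiarity" concrete, here is a minimal sketch of an approval check. Everything in it is a hypothetical illustration, not a description of any real product or standard: the field names, the set of high-risk actions, and the two required controls (a callback to a number already on file and a ticket in the system of record) are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of actions that always require verification.
HIGH_RISK_ACTIONS = {"wire_transfer", "password_reset", "mfa_reset"}


@dataclass
class Request:
    action: str
    sounds_familiar: bool      # perceived familiarity; recorded but never consulted
    callback_verified: bool    # confirmed via a number already on file
    ticket_id: Optional[str]   # reference in the system of record, if any


def is_approved(request: Request) -> bool:
    """Approve based only on policy controls, never on how the caller sounds."""
    if request.action not in HIGH_RISK_ACTIONS:
        return True
    # Note that request.sounds_familiar is deliberately absent from this decision.
    return request.callback_verified and request.ticket_id is not None


# A familiar-sounding "CEO" with no verification is refused.
cloned_voice = Request("wire_transfer", True, False, None)
# An unfamiliar caller who passes both controls is approved.
verified_caller = Request("wire_transfer", False, True, "T-1001")
```

The design choice is that familiarity is captured in the record but has no path into the decision, so a convincing voice clone gains the attacker nothing.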

AI Call Centers: It’s Not Only Industry Replacing Employees

AI outbound calling platforms are booming. A recent study provides insight into how they work:

“I’m building a social engineering Cold caller/Fake [customer service] for inbound and outbound calls, it recognizes leads independently and keeps up with the narratives. and is now even very responsive to questions like “How do I know its not a scam”? I’ve made some nice progress fine-tuning the model but will eventually make it available on Dread completely for free. If anyone wants to listen to test recordings and trial calls, I’m happy to share the audio.”

The days of rigid robocalls are over. Instead of targeting one customer or employee, there is a coordinated system running thousands of would-be callers simultaneously. The only limit here is compute and connectivity.

In 2025, Pindrop claimed a 1,300% increase in deepfake fraud attempts, reporting that they found a call center run entirely by AI. For example, social engineers can get access to ATHR, a platform discovered on underground forums, available for $4,000. ATHR only requires a single operator to orchestrate a full attack chain from a browser dashboard. The AI agents handle calls that would otherwise have been executed by trained human staff.

This is the industrialization of social engineering. A human-run vishing operation is constrained by the number of attackers, their skill, and the fatigue they experience. With minimal cognitive burden for the attacker, the asymmetry makes human resistance progressively less likely.

Without support, employees are forced to do all the deciding: searching for cues, assessing credibility and uncertainty, and fulfilling requests. Their cognitive load is orders of magnitude higher than the attacker's, and the burnout they experience is real.

Autonomous Agents: Agency Without Agents.

We have quickly entered an age of agentic adversaries. A state-sponsored group carried out the first documented cyberattack with limited human intervention. Anthropic, which reported the campaign, highlights new capabilities such as increased intelligence, agency, and tools that facilitated the attack in four phases: reconnaissance, vulnerability identification, lateral movement, and documentation of findings. It estimated that AI performed “80-90% of the campaign, with human intervention required only sporadically (perhaps 4-6 critical decision points per hacking campaign).”

In concluding that “The sheer amount of work performed by the AI would have taken vast amounts of time for a human team,” the Anthropic team is highlighting the degree of cognitive offloading: a largely autonomous system. Autonomy doesn’t occur at a single stage; it occurs at each layer of the stack.

Anthropic's evaluation of Mythos describes it as having frontier capability, able to identify and chain zero-day vulnerabilities across major operating systems and browsers, finding issues "often subtle or difficult to detect." In the wrong hands, such models could create unprecedented threats.

The problem is much larger than Mythos or any would-be competitors. Vidoc Security Lab reproduced several of Anthropic's Mythos vulnerability findings using publicly available models in a standard open-source coding agent. From their research, the conclusion is clear: the affordances of autonomous offensive AI are not exclusive to Anthropic's private stack. The moat is validation and operationalization, not model access. At under $30 per file scan, anyone can access these tools. No single lab’s stack defines the frontier. Defenders must plan accordingly.

How Does Their Stack Stack Up?

Concerns about the industrialization of AI are legitimate, but we must view them in context. When examined across sources, the news is largely good: social engineers have not fully adopted this technology. They are in the early exploratory phase. As the paper notes:

“malicious AI expertise still seemed limited to a small core of criminal innovators that had not yet reached a critical mass and was still debating the pros and cons of using legal LLMs versus customised cybercrime tools, of the best resource allocation model (hosted in the cloud or locally), and of the most promising use cases (social engineering seeming more auspicious than malware code writing).”

The threat landscape has looked this way before. The past 15 years have been defined by two waves of technological evolution that have emboldened attackers at the expense of defenders. In the first, DDoS-as-a-service, ransomware-as-a-service, and malware kits traded on underground forums were built to facilitate crime. In the second, legitimate defender tools like the “Swiss army knife of pen testing”, Cobalt Strike, were weaponized by cybercriminals and state-based actors. Unlike the first wave, which left malicious fingerprints on the attack attempts, co-opting defenders’ tools made attacks much harder to detect. Current AI tools present the same challenges.

Responding requires that we look below this emerging stack and realize that it is not just a list of tools, but a cognitive pipeline. As tools are introduced, the cognitive and financial costs of attacks lessen. Regardless of how long it will take, full automation is the goal. Attackers want passive income. If they can create a social engineering system, they will. As bottlenecks are removed, faster and more comprehensive attacks can take place.

The surge might go unnoticed for now: social engineers know that doing too much, too quickly will raise alarms. Defenders need to build and refine their own cognitive stack by providing employees with scaffolds and systems that can detect social engineering threats and help the humans responding to them. When the technology does not leave fingerprints, we have to look for the signal in human behavior.

What is feature engineering

In practice, feature engineering is both science and a bit of witchcraft. It often involves both iteration and experimentation to uncover hidden patterns and relationships within the data. For instance, a data scientist might transform raw sales data into features such as average purchase value, purchase frequency, or customer lifetime value, which can significantly boost the performance of a churn prediction model. By thoughtfully engineering features, practitioners can provide machine learning models with the most informative inputs, ultimately leading to better accuracy and more robust predictions.
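The sales example above can be sketched in a few lines. This is a minimal illustration using only the standard library. The record layout, the `build_features` helper, and the use of total spend as a crude stand-in for customer lifetime value are all assumptions made here, not a prescribed method.

```python
from collections import defaultdict

# Hypothetical raw sales records: (customer_id, purchase_amount).
raw_sales = [
    ("c1", 40.0),
    ("c1", 60.0),
    ("c2", 25.0),
]


def build_features(sales, window_months):
    """Aggregate raw purchases into per-customer model inputs."""
    amounts = defaultdict(list)
    for customer_id, amount in sales:
        amounts[customer_id].append(amount)

    features = {}
    for customer_id, values in amounts.items():
        total = sum(values)
        features[customer_id] = {
            "avg_purchase_value": total / len(values),
            # Purchases per month over the observation window.
            "purchase_frequency": len(values) / window_months,
            # Assumption: total spend as a simple proxy for lifetime value.
            "total_spend": total,
        }
    return features


# Assume the records above cover a two-month observation window.
features = build_features(raw_sales, window_months=2)
```

A churn model would then consume one row per customer from `features` rather than the raw transaction log.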

What’s more?

- Incorporate more and more data sources

- Feature engineering platform

What is data engineering

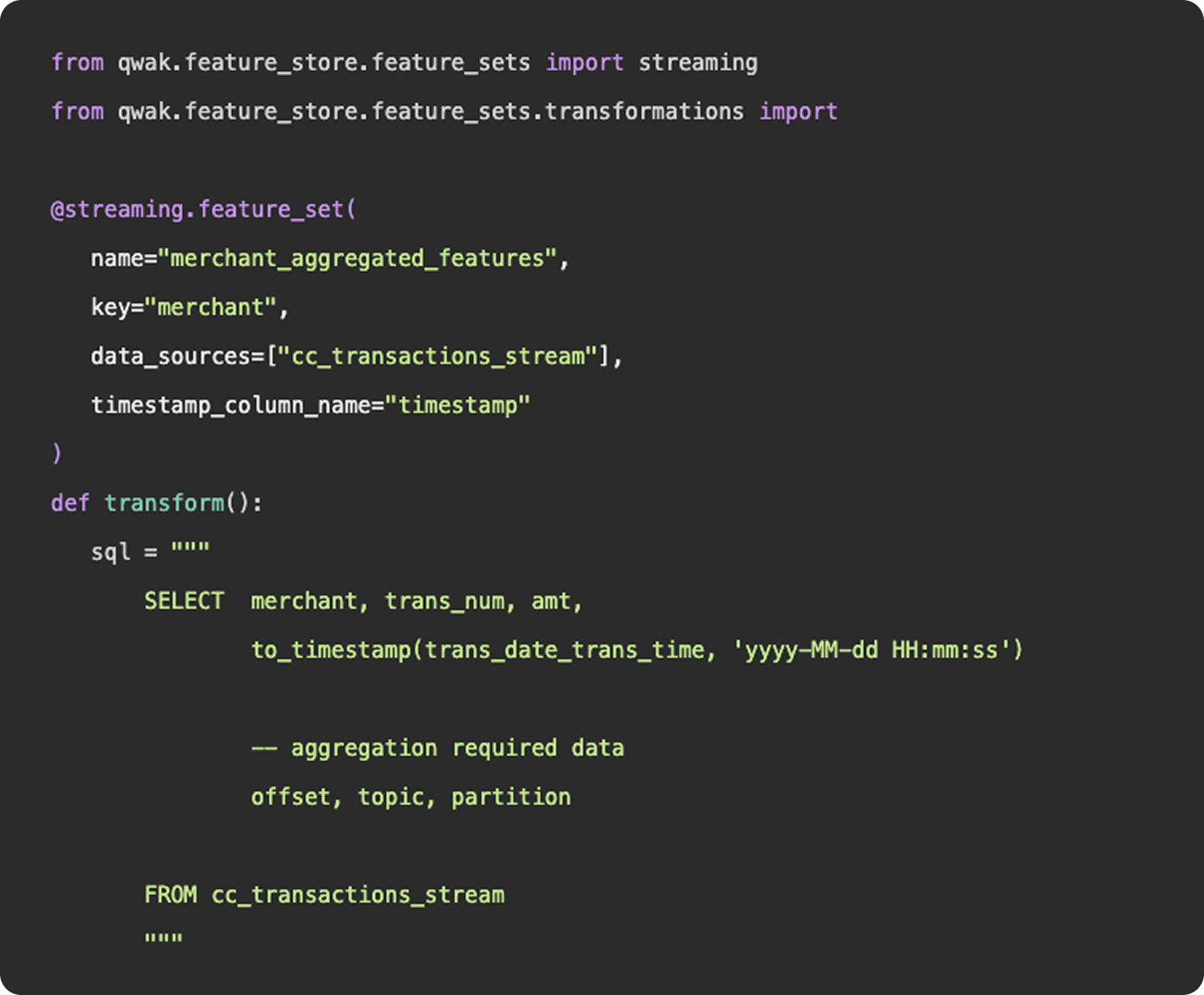

As we mentioned above, feature engineering is certainly a subset of data engineering. It involves the ingestion of data from a source, applying a series of transformations, and making the final result available to be queried by a model for training purposes. You can construct feature engineering pipelines to resemble data engineering pipelines, having schedules, specific source and sink destinations, and availability for querying. However, this configuration would only really apply once you have surpassed the experimentation stage and determined a need for a consistent flow of new feature data.
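The ingest, transform, and publish steps described above might look roughly like the following sketch. It uses an in-memory SQLite database as the sink purely for illustration. The function names, the `user_features` table, and the sample events are hypothetical, and a production pipeline would add scheduling and a real source system.

```python
import sqlite3


def ingest():
    """Stand-in for reading from a source system: (user_id, event, value)."""
    return [
        ("u1", "purchase", 20.0),
        ("u1", "purchase", 30.0),
        ("u1", "page_view", 0.0),
        ("u2", "purchase", 5.0),
    ]


def transform(rows):
    """Reduce raw events to one feature row per user."""
    totals = {}
    for user_id, event, value in rows:
        if event != "purchase":
            continue
        count, total = totals.get(user_id, (0, 0.0))
        totals[user_id] = (count + 1, total + value)
    return [(user, count, total / count) for user, (count, total) in sorted(totals.items())]


def load(conn, feature_rows):
    """Write features to the sink so a training job can query them."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS user_features ("
        "user_id TEXT PRIMARY KEY, purchase_count INTEGER, avg_purchase REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO user_features VALUES (?, ?, ?)", feature_rows)
    conn.commit()


def run_pipeline(conn):
    load(conn, transform(ingest()))


conn = sqlite3.connect(":memory:")
run_pipeline(conn)

# A training job queries the published features rather than the raw events.
training_rows = conn.execute(
    "SELECT user_id, purchase_count, avg_purchase FROM user_features ORDER BY user_id"
).fetchall()
```

Because `load` uses `INSERT OR REPLACE`, rerunning the pipeline on a schedule refreshes each user's row instead of duplicating it.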

What is feature engineering

1. Functions

Functionally, there is nothing to differentiate data from features: both are data points. Where feature engineering and data engineering really differ is in the objectives and motivations for constructing the pipelines. In general, data engineering serves a broader, more unified purpose than feature engineering. Data engineering platforms are constructed to be flexible and universal, ingesting various types and sources of data into a unified storage location where any number of transformations and use cases can be applied. The intent of a well-constructed fact table or gold layer in a data lake is to provide a single source of truth that answers many different questions, produces many reports, and can be consumed by many downstream customers.

2. Practice

In practice, an organization’s data engineering team will be responsible for the curation and maintenance of all data pipelines, not just those that relate to machine learning. These pipelines may power BI dashboards used by the C-suite, auditing reports that feed payroll, or event logs that show a user’s history of actions within the application.

Feature engineering, on the other hand, serves a specific purpose: finding the tailored inputs and columns that will generate the best predictive results for a machine learning model. Data scientists and machine learning engineers are not tasked with developing a universal data model that will ingest all data points throughout an organization; they just need to select, curate, and clean the data needed to power their models.

3. Machine learning

Now, as machine learning teams grow and begin to incorporate more and more data sources into their models, their feature engineering platform may start to resemble a larger data engineering platform in the tools and methodologies it employs. But the intent is not to establish flexible data models that can be used throughout the organization; it is simply to power their machine learning models.