Social Engineering: It’s a Psychology Problem

Social Engineering Defense Requires Psychology, Not Just Policy

Humanix is tackling cybersecurity's most overlooked attack surface, the human channel, by applying decades of psychological science to detect and disrupt social engineering before employees ever have to.

Social engineering has become one of the most concerning and persistent threats in cybersecurity. It’s also the least understood. In conversations, I hear people blame ‘human factors’ for error and bias without understanding where those problems come from. The narrative is simple: hapless people get duped. When I was invited to join Humanix, I shared their belief that this explanation wasn’t adequate.

Many organizational leaders and SOC analysts I have spoken with over the years share one common response: learned helplessness. Learned helplessness develops when people repeatedly try and fail to influence an outcome. Eventually, they stop trying.

When people feel disempowered, they often set those tasks aside and focus on what can be solved. It’s not a character flaw. It’s a simple decision calculus: we need to use our time productively, and some things we simply cannot change. But by quietly dropping these problems from our mental model of risk, we allow vulnerabilities to persist.

Security Debt = Psychological Debt + Technical Debt

Social engineering fits this pattern. Hardware and software are not trivial, but they are discrete systems. Patching zero-day vulnerabilities just requires time and ingenuity, and engineers have plenty of both.

Human factors pose a different problem, requiring a different skill set. Social engineers exploit this space.

Leaders of organizations and professional societies are aware of the issue and interested in learning more, but they often don’t know how to address it. That’s the black box of human factors. After I speak at events, people often approach me, almost apologetically, to find out more. I’m not concerned about these people. I’m concerned about those who don’t ask these questions.

The main issue is familiarity, not intellect. SOC analysts and CISOs think in terms of networks and programs, not people. They don’t know how to define cybersecurity along human dimensions. By ignoring the cognitive (how we think) and social (how we interact) dimensions, we create security vulnerabilities. This is the accumulated psychological debt of cybersecurity, and we need to pay it down.

Unfortunately, people are often seen as the vulnerability. I’ve read descriptions of insider threat that claim, “All employees are potential insider threats.” It’s bad marketing, and it’s troubling.

I wondered about the organizational climate and culture that this mentality creates. Employees cannot feel valued if they are perceived as threats rather than as people whose knowledge and skills are assets. They must be supported.

If we cannot expect a typical employee to understand how deep neural networks, encryption algorithms, and quantum computing work, we cannot expect them to understand how the human brain works either.

A New World with Old, Brittle Solutions

For those who assume that humans are the problem, there are only two solutions: better security policies and more effective training. If these are solutions, they aren’t solving the social engineering problem.

Security Policies: Drafted, Distributed, and Dismissed.

Security policies contain the principles and practices that should help secure an organization. While a great deal of thought might go into their creation, less goes into their interpretation.

Security policies are often presented during onboarding and left to each employee to digest. Updates might be communicated, but rarely contextualized. In organizations I’ve worked for, a random sample of employees will often reveal that few, if any, know where to find these policies, let alone what they contain. While there are always some ‘policy nerds’, most employees aren’t. At best, they fall back on their own guesses and ‘common sense’.

Even when employees can and want to access policies, most policies provide only limited detail. They offer boilerplate principles and requirements, but these are minimum standards. The reason is simple: technologies evolve too quickly and vary too widely for a policy to capture every relevant feature, and organizations are too structurally diverse for a policy to include every possible detail. A policy that tried would be far too long.

Most security practices are left to an employee’s judgment. Unless that judgment is shaped by extensive training and experience, we can’t expect employees to apply policy like experts.

Training: Tried, Tested, and Tired.

If experience is key, then training would seem to be the answer. It’s not: training doesn’t work.

Employees read through pages of text or watch videos. Some questions follow. They check a box. Even if the content is well structured and contains valuable information, those lessons are quickly lost to the demands of work. To stay valuable, knowledge and skills must be used. We must connect abstract concepts to daily practice. If we cannot, the information slowly fades away.

Most employees are not exposed to social engineering attempts. Even those who are don’t receive feedback about what made the deceptive attempt successful.

Employees cannot be expected to develop social engineering detection skills on their own. They have limited time and attention, and both are quickly pulled elsewhere. Although security is intimately bound up in their daily tasks, it requires looking at those tasks from a different direction. They are hired to work in finance, customer service, and marketing, not to catch the ‘bad guys’.

Employees must trust each other to get their work done, yet security asks them to question those interactions. Do I know this person? Should I help this colleague? Is someone else using my colleague’s email? This creates friction and slows down business operations.

Social engineers are aware of this fact and exploit it.

Social Engineering is an Applied Science

Cybersecurity must recognize that social engineering is an applied science. Through trial and error, cybercriminals are testing your defenses. Security requires a continuous, strategic defense that can meet and exceed evolving threats. Because the technologies keep evolving, we need to look beyond the technology.

Emails, calls, SMS, and video conferencing have one thing in common: a human channel. If we can lock down the human channel, we stand a chance at stopping social engineering.

The tools social engineers use are not new. Deception and persuasion work because they exploit trust. Adversaries leverage these vulnerabilities through impersonation and pretexts. They don't need to be complicated.

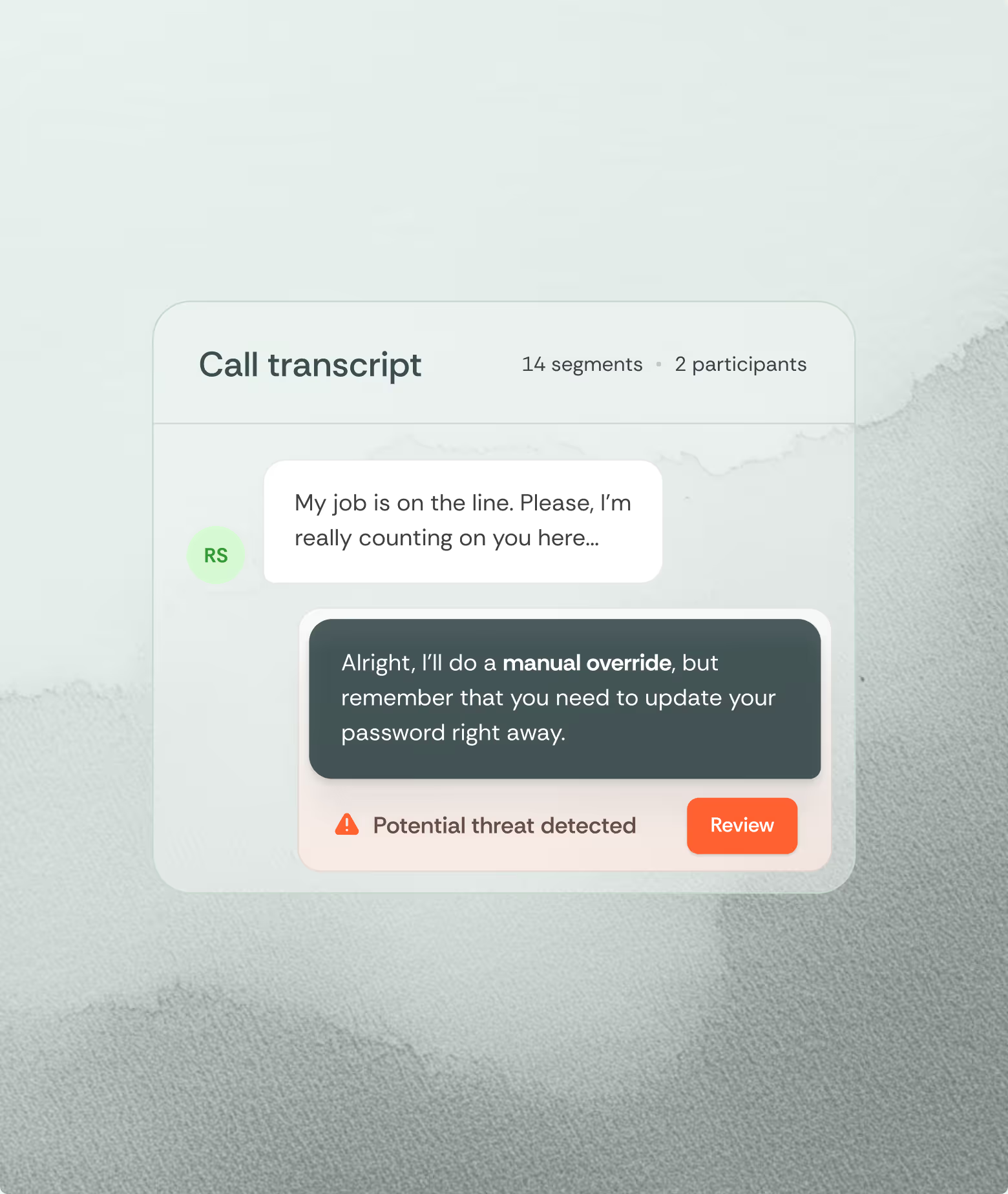

In the recent Clorox-Cognizant incident, Scattered Spider used vishing (voice phishing): a simple phone call with a request. It was a good choice. A phone call carries fewer of the social cues that could have helped the agent detect deception. The caller’s appearance, body language, and discrepant tones that might otherwise suggest dishonesty were all safely hidden behind a phone line. Conversations can unfold within seconds, leaving little time to reflect.

Vishing is quick, simple, and effective. As the lawsuit notes: “Cognizant was not duped by an elaborate ploy.” The caller’s identity wasn’t verified. The agent just complied. They wanted to help. That was their job.

Most discussions of vishing and phishing scams invoke ‘authority’ and ‘urgency’. These techniques are effective: they assume a trusted role and compress our time to think and respond. But they are only a small part of a larger toolbox, one that helps humans navigate the world and that savvy human hackers turn against them.

Cognizant’s response to the lawsuit is equally telling. The company defends itself by claiming that its mandate had “a narrow scope”. This is not an admission of guilt; it’s an acknowledgment that employees couldn’t reasonably be expected to recognize social engineering. The courts will decide what is reasonable here, but companies must decide their approach for themselves.

If social engineering is a science that uses deception and persuasion systematically, we need a science-based solution. If employees cannot be expected to recognize the signs, we need to help them. Researchers in psychological science have studied this problem for decades, isolating the cues and interactional patterns that can signal manipulation.

Searching for a Solution

To stop social engineering, we must understand these processes and build systems capable of recognizing them in real time. They must be faster than adversaries can exploit them, and faster than employees can be deceived.

Psychological science has been studying the mechanics of deception, persuasion, and compliance for over a century. We know which conversational patterns often signal manipulation. We know how urgency and authority can compress decision-making. We know the subtle interactional cues that define deceptive calls. This knowledge exists. It simply hasn't been operationalized for security in a systematic way.

That's the gap Humanix was built to close.

Rather than asking employees to become amateur psychologists, or asking security teams to become social scientists, Humanix embeds that expertise directly into the human channel: it monitors for the linguistic and conversational signatures of social engineering attempts in real time, intercepting the threat at the point of contact.

The human channel has been left undefended not because the science wasn't there, but because no one has integrated it into the security stack. We’re changing that now.

Interested?

Contact me.

What is feature engineering

In practice, feature engineering is both a science and a bit of witchcraft. It often involves iteration and experimentation to uncover hidden patterns and relationships within the data. For instance, a data scientist might transform raw sales data into features such as average purchase value, purchase frequency, or customer lifetime value, which can significantly boost the performance of a churn prediction model. By thoughtfully engineering features, practitioners can provide machine learning models with the most informative inputs, ultimately leading to better accuracy and more robust predictions.
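To make the sales example concrete, here is a minimal Python sketch, assuming a hypothetical `Transaction` record with a customer ID, a date, and an amount. It aggregates raw transactions into the three kinds of features mentioned above. The `historical_value` column is a deliberately naive stand-in for customer lifetime value (total spend observed in the window), not a proper CLV forecast, and normalizing purchase frequency to a 30-day rate is an illustrative choice.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Dict, Iterable


@dataclass(frozen=True)
class Transaction:
    """One raw sales record (hypothetical schema)."""
    customer_id: str
    day: date
    amount: float


def build_customer_features(
    transactions: Iterable[Transaction], window_start: date, window_end: date
) -> Dict[str, Dict[str, float]]:
    """Aggregate raw transactions inside [window_start, window_end] into per-customer features."""
    window_days = (window_end - window_start).days + 1  # inclusive window length
    totals: Dict[str, float] = defaultdict(float)
    counts: Dict[str, int] = defaultdict(int)
    for t in transactions:
        if window_start <= t.day <= window_end:
            totals[t.customer_id] += t.amount
            counts[t.customer_id] += 1

    features: Dict[str, Dict[str, float]] = {}
    for customer_id, n in counts.items():
        features[customer_id] = {
            "avg_purchase_value": totals[customer_id] / n,
            # Normalized to a 30-day rate so windows of different lengths compare.
            "purchases_per_30_days": n * 30 / window_days,
            # Naive stand-in for customer lifetime value: total spend observed so far.
            "historical_value": totals[customer_id],
        }
    return features


txns = [
    Transaction("a", date(2024, 1, 5), 20.0),
    Transaction("a", date(2024, 1, 20), 40.0),
    Transaction("b", date(2024, 1, 10), 15.0),
    Transaction("b", date(2024, 3, 1), 99.0),  # outside the window, ignored
]
features = build_customer_features(txns, date(2024, 1, 1), date(2024, 1, 30))
print(features["a"])
# {'avg_purchase_value': 30.0, 'purchases_per_30_days': 2.0, 'historical_value': 60.0}
```

Each output row is one customer, which is the shape a churn model would typically consume; richer versions would add recency, trends, or a modeled CLV.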

What’s more?

- Incorporate more and more data sources

- Feature engineering platform

What is data engineering

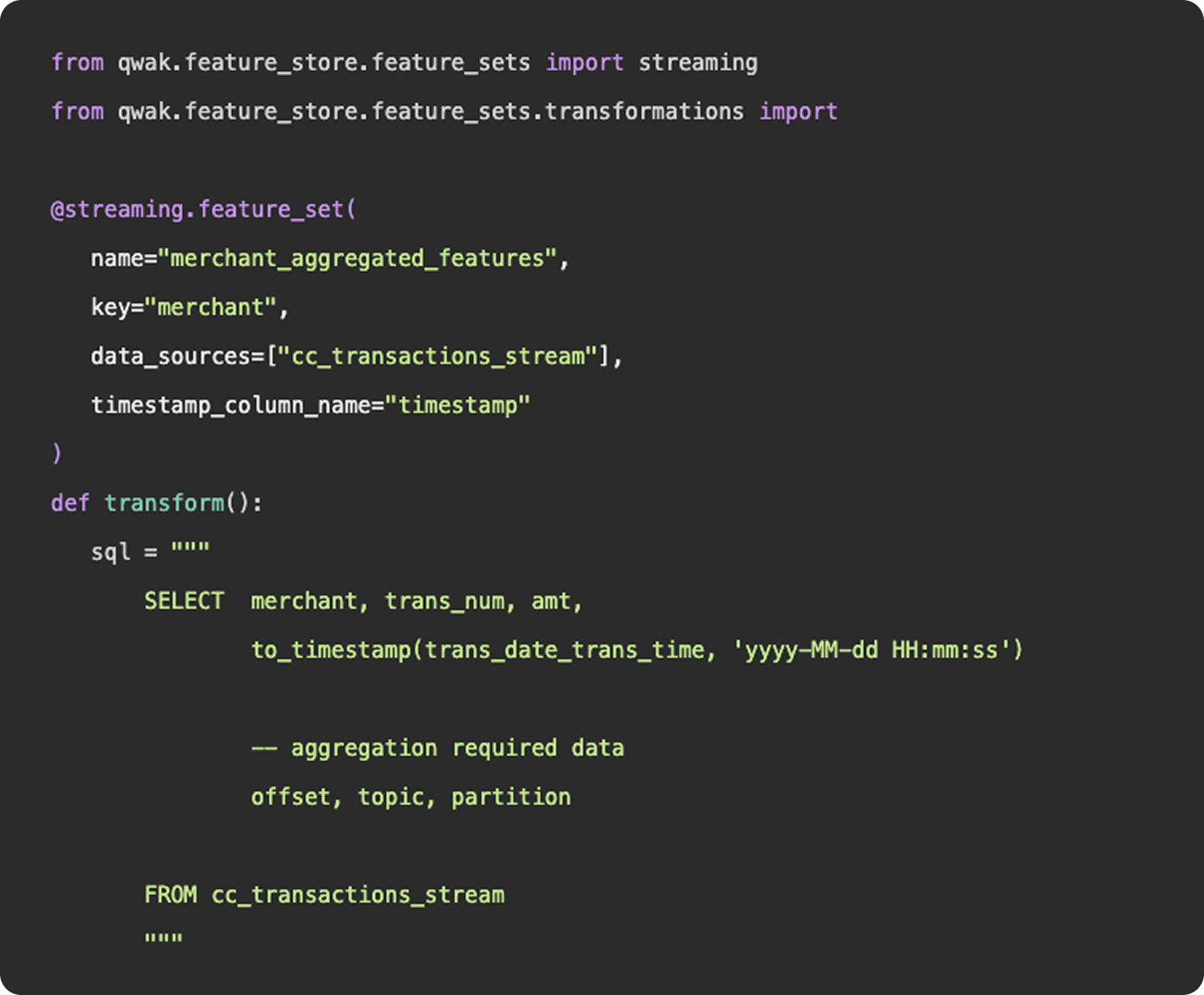

As we mentioned above, feature engineering is certainly a subset of data engineering. It involves ingesting data from a source, applying a series of transformations, and making the final result available for a model to query during training. You can build feature engineering pipelines that resemble data engineering pipelines, with schedules, specific source and sink destinations, and availability for querying. However, this setup only really applies once you have moved past the experimentation stage and identified a need for a consistent flow of new feature data.
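The pipeline shape described above (source, ordered transformations, queryable sink) can be sketched in a few lines of Python. Everything here is hypothetical: `run_feature_pipeline`, `InMemoryFeatureSink`, and the two transforms are illustrative names, not any feature store's API, and scheduling is left out entirely. In a production setup, the source would read from a real system, the sink would be a database or feature store, and an orchestrator would run the function on a schedule.

```python
from typing import Any, Callable, Dict, Iterable, List, Optional

Row = Dict[str, Any]
Transform = Callable[[List[Row]], List[Row]]


class InMemoryFeatureSink:
    """Hypothetical sink that keeps the latest feature row per entity for lookup."""

    def __init__(self) -> None:
        self._rows: Dict[Any, Row] = {}

    def write(self, rows: Iterable[Row], key: str) -> None:
        for row in rows:
            self._rows[row[key]] = row  # later writes overwrite earlier ones

    def query(self, entity_id: Any) -> Optional[Row]:
        return self._rows.get(entity_id)


def run_feature_pipeline(
    source: Callable[[], Iterable[Row]],
    transforms: List[Transform],
    sink: InMemoryFeatureSink,
    key: str,
) -> int:
    """Ingest from a source, apply transforms in order, and publish to the sink."""
    rows = list(source())
    for transform in transforms:
        rows = transform(rows)
    sink.write(rows, key)
    return len(rows)


def raw_source() -> List[Row]:
    return [
        {"customer_id": "a", "spend": 60.0, "visits": 3},
        {"customer_id": "b", "spend": None, "visits": 1},
    ]


def drop_incomplete(rows: List[Row]) -> List[Row]:
    # Cleaning step: discard rows with any missing value.
    return [r for r in rows if all(v is not None for v in r.values())]


def add_spend_per_visit(rows: List[Row]) -> List[Row]:
    # Derived feature: average spend per visit.
    return [{**r, "spend_per_visit": r["spend"] / r["visits"]} for r in rows]


sink = InMemoryFeatureSink()
written = run_feature_pipeline(
    raw_source, [drop_incomplete, add_spend_per_visit], sink, key="customer_id"
)
print(written, sink.query("a"))
```

Passing transforms as a plain list keeps experimentation cheap: during exploration you can reorder or swap functions freely, and only once the set stabilizes does it make sense to wrap the call in scheduling and durable storage.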

How feature engineering differs from data engineering

1. Functions

Functionally, nothing distinguishes data from features: both are just data points. Where feature engineering and data engineering really differ is in the objectives and motivations behind their pipelines. In general, data engineering serves a broader, more unified purpose than feature engineering. Data engineering platforms are built to be flexible and universal, ingesting various types and sources of data into a unified storage location where any number of transformations and use cases can be applied. The intent of a well-constructed fact table or gold layer in a data lake is to provide a single source of truth that answers many different questions, produces many reports, and serves many downstream consumers.

2. Practice

In practice, an organization’s data engineering team is responsible for curating and maintaining all data pipelines, not just those related to machine learning. These pipelines may power BI dashboards used by the C-suite, auditing reports that feed payroll, or event logs that show a user’s history of actions within the application.

Feature engineering, on the other hand, serves a specific purpose: finding the tailored inputs and columns that will produce the best predictive results for a machine learning model. Data scientists and machine learning engineers are not tasked with developing a universal data model that ingests every data point across an organization; they just need to select, curate, and clean the data that powers their models.
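As a rough illustration of that narrower scope, the sketch below takes a wide, general-purpose table (the kind a data engineering team might maintain for reports) and keeps only the columns one model needs, dropping incomplete rows. The table contents and the `select_training_data` helper are hypothetical, and skipping rows with missing values is just one cleaning strategy; imputation is a common alternative.

```python
from typing import Any, Dict, List, Sequence, Tuple


def select_training_data(
    records: Sequence[Dict[str, Any]],
    feature_columns: Sequence[str],
    target_column: str,
) -> Tuple[List[List[Any]], List[Any]]:
    """Select only the columns a model needs and drop rows missing any of them."""
    needed = list(feature_columns) + [target_column]
    X: List[List[Any]] = []
    y: List[Any] = []
    for rec in records:
        values = [rec.get(col) for col in needed]
        if any(v is None for v in values):
            continue  # cleaning step: skip incomplete rows rather than impute
        X.append(values[:-1])
        y.append(values[-1])
    return X, y


# A wide, general-purpose table (hypothetical) that also feeds other consumers.
gold_table = [
    {"customer_id": "a", "region": "EU", "avg_purchase_value": 30.0,
     "purchases_per_30_days": 2.0, "churned": 0},
    {"customer_id": "b", "region": "US", "avg_purchase_value": None,
     "purchases_per_30_days": 1.0, "churned": 1},
    {"customer_id": "c", "region": "US", "avg_purchase_value": 12.5,
     "purchases_per_30_days": 0.5, "churned": 1},
]

X, y = select_training_data(
    gold_table, ["avg_purchase_value", "purchases_per_30_days"], "churned"
)
print(X, y)  # [[30.0, 2.0], [12.5, 0.5]] [0, 1]
```

Note that `region` and `customer_id` stay in the shared table for other consumers; the model simply never sees them.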

3. Machine learning

Now, as machine learning teams grow and incorporate more and more data sources into their models, their feature engineering platform may start to resemble a larger data engineering platform in its tools and methodologies. But the intent is not to establish flexible data models for use throughout the organization; it is simply to power their machine learning models.