AiTM at Scale: What the Code of Conduct Campaign Reveals About Detection Architecture

The Attack That Hides in Plain Sight

In sophisticated social engineering campaigns, the attack signal is distributed across a sequence of compliance events. Individually, each event is unremarkable; collectively, they are significant. That pattern makes these campaigns resistant to conventional detection. This article examines a campaign that reached 35,000 users across 13,000 organizations. It cleared each individual control in turn, which shows why these attacks have to be understood as sequences.

Adversaries are racing to exploit the cognitive shortcuts that make social interactions efficient. AI has provided both a new training ground and a new arena for developing these attacks. But beneath these technical layers, we cannot lose sight of the fact that psychology is the driver.

By April 16, 2026, a credential-theft campaign had reached more than 35,000 users in over 13,000 organizations across 26 countries. That reach provides an unusually large sample. Microsoft confirmed adversary-in-the-middle (AiTM) token capture at the terminal stage. The campaign passed SPF, DKIM, and DMARC. The malicious links were embedded in PDF attachments, bypassing URL scanning on delivery. No flags, no problems. Two CAPTCHA gates prevented sandbox detonation. The final page proxied a live Microsoft authentication session: functional, real, and indistinguishable from a legitimate sign-in.

Every automated control in the enterprise stack reviewed the campaign and cleared it. That outcome was not a failure of any individual control. It was the intended result. That is how effective social engineering campaigns work: they sidestep the technical controls and focus on the human attack surface.

How Adversaries Clear the Cognitive Hurdles: Control Clearance Sequences

What makes this attack visible is the six-stage sequence Microsoft sketched, together with the campaign's sheer scale and success. Each stage represents both a technical control and a cognitive hurdle: a decision point that must be completed, denied, or bypassed. Clear the cognitive hurdles and you reach the finish line. Adversaries need to neutralize defenders' deliberation one step at a time, using practices, procedures, and programs that superficially resemble institutional scripts.

Hurdle 0: The Subject Line and Hindsight Bias

It is not just LLMs that 'hallucinate'. Human memory is fickle. We often receive only fragmentary information because we failed to pay attention in the moment, we are overwhelmed with other information, or we misinterpreted a cue. When we seem to have missed something, we rationalize it away.

Subject lines like "Reminder: employer opened a non-compliance case log" feed into hindsight bias, the belief that we must have known something all along. In a fast-paced world of vague and continuous bureaucratic workflows, it's easy to miss routine activities.

Past-tense bureaucratic framing ("case log issued") presents the case as an established fact. Recipients reconstruct a plausible event in their minds from prior experience and organizational knowledge. Familiar business transactions are so routine and scripted that we already know the pace. The adversary fires the first shot, and the defender's mind starts the race.

Hurdle 1: The Email Body

After the defender jumps the first hurdle, they land in the email body. Their eyes scan the unknown. Formal titles like "Internal Regulatory COC," "Workforce Communications," and "Team Conduct Report" are just familiar enough to spark a sense of recognition. They look like the kind of communication HR would send.

A header stating the message was "issued through an authorized internal channel" and that links had been "reviewed and approved for secure access" is a preemptive legitimacy claim. It suggests that scrutiny has already been performed upstream. A Paubox HIPAA-compliance banner in the footer invokes a real compliance technology as a trust signal. This is particularly effective in healthcare and financial services environments, which together accounted for 37% of targets.

Hurdle 2: Opening the Attachment

HR and conduct workflows are high-familiarity, low-frequency events. Recipients recognize them immediately, but because they are rare, recipients have few procedural details to draw on. That limited procedural experience is the vulnerability: employees have to infer the process and its security standards. To keep the momentum going, they draw on general knowledge from memory, not an operations manual.

Recently dated documents appear to come from an active workflow. Everyone uses different conventions, and that variability creates another vulnerability: close enough is good enough. Now you face a forced choice: ignore the attachment, and you ignore potentially critical information. Whatever cybersecurity training mentioned attachment scams has been swamped by thousands of real emails with real attachments requiring real responses. This everyday email script runs every minute.

The momentum continues as your commitment escalates. You have taken a series of incremental steps: reading the subject line, opening the email, reading the body, and opening the attachment.

Hurdles 3-4: Legitimating Barriers

Then comes the Cloudflare CAPTCHA. It's annoyingly recognizable: a seemingly small hurdle that people face every few minutes they spend online. Legitimate services use CAPTCHAs. Attackers don't. That's the assumption. Such checks confer legitimacy, defining a gateway that stops attackers: a boring boundary around a protected space on an otherwise unruly web.

Each completed CAPTCHA is a micro-compliance event; each one speeds up our thinking as we grow comfortable. The script can now shift. Each page looks professional and reinforces the narrative. There is an imperative task buried in here, but no new schema is required. We work on autopilot.

An email address is handed over without thinking: it is simply the next logical step in the workflow. The mental script now generates its own compliance pressure; the attacker no longer has to ask.

Hurdle 5: The Final Push

"Verification completed successfully" signals progress toward cognitive closure. Every narrative requires an end, and now it's in sight. The threat and anxiety induced in the employee at the first hurdle begin to fade as the goal approaches.

In the race created by the attackers, that otherwise meaningless small reinforcement takes on a larger role. This no longer feels like compliance. It's voluntary. The victim wants to complete this action. They are no longer pushed, they are being pulled. This is necessary for the AiTM page to work: the attackers need the victim to give their credentials over willingly. Anything that seems unfamiliar or out of place will cause the victim to stumble and withdraw from the race.

With no pause, only one hurdle remains.

The Finish Line: AiTM Gold

The final page informed users that their materials had been "securely logged," "time-stamped," and stored in a "centralized compliance tracking system." One action remains: schedule a time. That's the final step to resolve the case, to finish this race.

The Microsoft sign-in page is presented. Now it's time for real credentials. There is no further deception to perform; the pre-suasion has already happened, and the same sequence was delivered to more than 35,000 users. The AiTM proxy intercepts the authentication session in real time. The victim completes the genuine sign-in, including any MFA prompt, and the resulting session token is captured, so the attacker never has to defeat MFA directly. As the victim crosses the finish line, it's the attacker who gets the gold. The more familiar the employee is with the script, the faster the finish.

Sequential Attacks: Trained to Comply

Before we blame the victim in hindsight, look back at the hurdles employees faced. They were trained for this race. Every element was congruent with the mental scripts and schemas of the workplace. The email passed through filters, the PDF contained no malware, the CAPTCHA domain was plausible, the intermediate pages delivered the daily dose of bureaucratic text, and the final page proxied real Microsoft infrastructure.

This is a meticulously planned pathway that induces compliance. Once authority is established at the first hurdle, commitment builds incrementally, and vigilance comes to seem unnecessary before it becomes unavailable. By the time credential capture is imminent, there's no reason to resist. Scheduling an appointment feels necessary.

No single-stage anomaly detector fires because no single stage is anomalous. The attack signal is fragmented across the chain of compliance events and is visible only when the chain is viewed as a whole. In high-volume environments bombarded by email, employees rely on the technology for validation. As alert fatigue grows and vigilance decays, compliance becomes the default.
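The arithmetic behind "no single stage is anomalous" can be sketched in a few lines. This is a minimal illustration, assuming each hurdle has already been given a hypothetical suspicion score between 0 and 1 and that the stages are treated as independent evidence (a simplification; the stages of a real campaign are correlated). A per-event detector with a 0.5 threshold stays silent on every stage, while a noisy-OR combination of the same scores crosses that threshold.

```python
# Hypothetical per-stage suspicion scores (0..1); illustrative values, not measurements.
STAGE_SCORES = {
    "hurdle_0_subject": 0.2,
    "hurdle_1_body": 0.2,
    "hurdle_2_attachment": 0.2,
    "hurdles_3_4_captcha": 0.2,
    "hurdle_5_final_push": 0.2,
    "finish_sign_in": 0.2,
}
THRESHOLD = 0.5  # hypothetical alerting threshold


def single_stage_alerts(scores: dict[str, float], threshold: float) -> list[str]:
    """Stages that a per-event detector would flag on their own."""
    return [stage for stage, p in scores.items() if p >= threshold]


def noisy_or(scores: dict[str, float]) -> float:
    """Combine stage scores assuming independence: 1 - product of (1 - p)."""
    p_no_signal = 1.0
    for p in scores.values():
        p_no_signal *= 1.0 - p
    return 1.0 - p_no_signal


if __name__ == "__main__":
    print(single_stage_alerts(STAGE_SCORES, THRESHOLD))  # []
    print(round(noisy_or(STAGE_SCORES), 3))  # 0.738
```

Noisy-OR is only one simple way to combine weak signals; the scores, stage names, and threshold here are placeholders chosen to show the effect, not values from the campaign.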

Microsoft's recommended mitigations include zero-hour auto purge (ZAP), Safe Links, Safe Attachments, phishing-resistant MFA, and Conditional Access. These are controls on artifact delivery and on the authentication event. Phishing-resistant MFA stops credential theft at a fake sign-in page. None of these controls, however, prevents a user from walking through the compliance steps that lead to that page. That vulnerability exists entirely upstream.

The Real Competition: Interrupting the Race

Social engineers win when we accept their reality and compete on their terms. Defense requires interrupting the race at specific points, looking both at and between the hurdles. What gaps do policies, procedures, and practices leave? If we help employees fill that space with deliberation before they commit, we have a chance.

Detecting Sequential Attacks

Most security programs measure phishing click rates, real or simulated. Standard SIEM correlation rules and anomaly detection target single events. These metrics and methods capture only a single stage. Sequential social engineering campaigns require detecting extended sequences of behavior: flagging the co-occurrence of PDF-delivered links, CAPTCHA interactions, and credential submission events within a defined time window. UEBA can be adapted to close this gap, but it is typically not configured for this purpose. Metrics can then track sequence completion rates, the stage at which a sequence was detected, the features that triggered detection, and time to report.
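A sequence rule of the kind described above might look like the following sketch. It assumes telemetry has already been normalized into per-user events with three hypothetical event types (`pdf_link_click`, `captcha_challenge`, `credential_submit`) and uses a 30-minute window chosen purely for illustration; real field names, sources, and window sizes depend on your SIEM and UEBA tooling.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical normalized event types, in the order the campaign walks a victim through them.
SEQUENCE = ("pdf_link_click", "captcha_challenge", "credential_submit")


@dataclass(frozen=True)
class Event:
    user: str
    time: datetime
    kind: str


def _match_from(user_events, start, window, sequence):
    """Return the completion time if `sequence` finishes in order within `window` of user_events[start]."""
    first = user_events[start]
    stage = 1  # the event at `start` already matched sequence[0]
    if stage == len(sequence):
        return first.time
    for later in user_events[start + 1:]:
        if later.time - first.time > window:
            return None
        if later.kind == sequence[stage]:
            stage += 1
            if stage == len(sequence):
                return later.time
    return None


def find_sequences(events, window=timedelta(minutes=30), sequence=SEQUENCE):
    """Return (user, start, end) for the first in-order completion of `sequence` per user."""
    by_user = defaultdict(list)
    for event in events:
        by_user[event.user].append(event)

    hits = []
    for user, user_events in by_user.items():
        user_events.sort(key=lambda e: e.time)
        for i, event in enumerate(user_events):
            if event.kind != sequence[0]:
                continue
            end = _match_from(user_events, i, window, sequence)
            if end is not None:
                hits.append((user, event.time, end))
                break  # one hit per user is enough to open a triage case
    return hits


if __name__ == "__main__":
    t0 = datetime(2026, 4, 16, 9, 0)
    events = [
        Event("alice", t0, "pdf_link_click"),
        Event("alice", t0 + timedelta(minutes=2), "captcha_challenge"),
        Event("alice", t0 + timedelta(minutes=6), "credential_submit"),
        Event("bob", t0, "pdf_link_click"),
        Event("bob", t0 + timedelta(hours=3), "credential_submit"),
    ]
    for user, start, end in find_sequences(events):
        print(user, end - start)  # alice 0:06:00
```

The per-user scan is quadratic in the worst case, which is acceptable for a sketch; in production the same logic would more likely be expressed as a correlation or sequence rule inside the SIEM itself.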

Non-technical solutions must go beyond training. We know that repeated, simplified exercises fail to provide the rich lessons needed to identify and respond to attacks. Warning people to 'be suspicious' or 'don't click on attachments' is ineffective. We need to trust employees and third parties for business to operate, and attachments, sadly, will have to be opened. Environments change too often and too quickly for these lessons to provide anything more than a general foundation.

Training does little to help with the cognitive load of everyday activities, and alerts can compound the problem. Simply holding an important goal does not guarantee that we can keep it in mind when and where we need it. The same limitation that makes us lose our keys or forget something at the store creates policy compliance gaps.

Structured Decision-Point Friction

If our autopilot doesn't work, self-regulation provides one answer. Security professionals can develop implementation intentions: specific if-then rules that link a situational cue to a planned response. For example: if I see an email creating urgency, then I verify it through an independent channel. Providing words and images can strengthen these cues. Research suggests that this approach improves prospective memory, the ability to remember to perform an intended action in the future. But the efficacy of these methods remains largely untested in cybersecurity.

Security awareness programs need to be rebuilt around specific, actionable cue-response pairs rather than general principles. Each of these technical hurdles can be aligned with a simple cognitive strategy:

- Hurdle 0: Past-tense and urgency framing must be treated as verification triggers, not as prompts to comply.

- Hurdle 1: Roles and organizational units that cannot be verified through an independent internal directory should be treated as unverified, regardless of how familiar they appear.

- Hurdle 2: Organizations must define explicit norms for conduct and HR workflows, including a named out-of-band verification path employees can follow before taking action.

- Hurdles 3-4: CAPTCHAs protect their site operators. They offer the user no protection. Treat every CAPTCHA or challenge as a threshold, and consider what crossing it can lead to.

- Hurdle 5: Requests for an email address, personal information, and passwords should induce the highest threat signal. The finality of the moment must be recognized.
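One way to keep these cue-response pairs actionable is to store them as data that awareness material, a mail banner, or a help-desk script can all draw on. The sketch below is a minimal illustration under that assumption; the cue identifiers and response wording are hypothetical paraphrases of the list above, not a standard taxonomy.

```python
from typing import NamedTuple


class CueResponse(NamedTuple):
    hurdle: str
    cue: str
    response: str


# Hypothetical cue identifiers; wording paraphrases the cue-response list above.
PLAYBOOK = {
    "past_tense_or_urgency": CueResponse(
        "Hurdle 0", "Past-tense or urgency framing", "Treat as a trigger to verify, not to comply"),
    "unverifiable_unit": CueResponse(
        "Hurdle 1", "Role or unit not in the internal directory", "Treat the sender as unverified"),
    "hr_conduct_workflow": CueResponse(
        "Hurdle 2", "HR or conduct request with an attachment", "Use the named out-of-band verification path"),
    "captcha_or_challenge": CueResponse(
        "Hurdles 3-4", "CAPTCHA or other challenge", "Pause and ask what crossing this threshold leads to"),
    "identity_request": CueResponse(
        "Hurdle 5", "Request for email address, personal data, or password", "Highest threat signal: stop"),
}


def responses_for(observed_cues):
    """Return playbook entries for the observed cues in hurdle order; unknown cues are ignored."""
    observed = set(observed_cues)
    return [entry for cue_id, entry in PLAYBOOK.items() if cue_id in observed]


if __name__ == "__main__":
    for entry in responses_for(["captcha_or_challenge", "past_tense_or_urgency", "unknown"]):
        print(f"{entry.hurdle}: {entry.response}")
```

Because dictionaries preserve insertion order, the responses come back in hurdle order regardless of the order in which cues were observed.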

Incorporating AI into Your Security Workflow

Rules, training, communication, and program implementation can improve responses. But they do not address the cognitive limitations of help desk agents and SOC analysts faced with an overwhelming environment. Ask yourself:

- How many rules do we expect an employee to learn?

- How much time do we allow them to stop and think?

- What other activities will occupy their time?

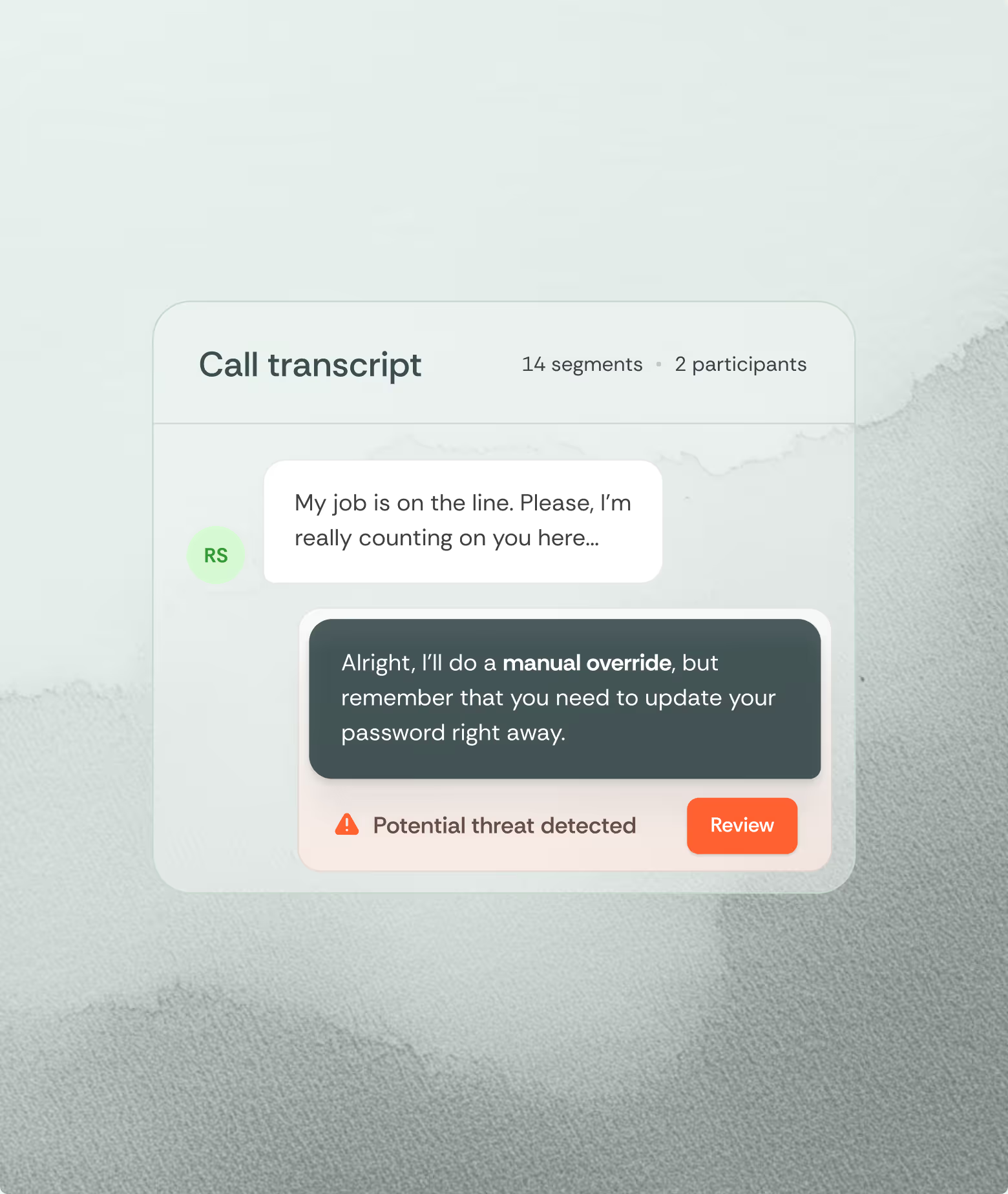

These are the limits of human defenders' memory under the typical cognitive load of the workplace. They are not failures of training. Boards need to plan for the workforce capacity limits of the human attack surface and adopt technologies responsibly. Emerging AI solutions that harness the capabilities of language models can help identify social engineering by detecting individual cues and sequential attacks that might otherwise be missed.

When emails are received, the language of the email and its attachment can reveal cues: familiar patterns of implicit requests and calls to action. AI detection models can be trained to identify urgency framing, escalating commitment cues, and attachment-focused action prompts. When these signals appear together, they reveal an attack narrative that cannot be ignored. Scaled to the organizational level, we no longer see idiosyncratic cons; we see structured campaigns.

As long as attacks hide in familiar schemas, social engineers will succeed. Their attack is carried along by everyday workflows while they watch the race unfold. They don't need to intervene. If they have done their job, the racer follows the course to completion.
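As a rough illustration of cue extraction, the sketch below uses hand-written regular expressions as a stand-in for a trained language model. The cue families follow the paragraph above and the hurdles described earlier; the patterns, the `narrative_score` ratio, and the crude subject normalization used to group messages across organizations are all hypothetical choices, not a description of any product.

```python
import re
from collections import defaultdict

# Hypothetical hand-written cue detectors standing in for a trained language model.
CUE_PATTERNS = {
    "urgency": re.compile(r"\b(reminder|urgent|immediately|final notice)\b", re.IGNORECASE),
    # Bureaucratic case language, including past-tense "has been issued" framing.
    "case_framing": re.compile(
        r"\b(non-compliance|case log|has been (logged|filed|issued))\b", re.IGNORECASE),
    "legitimacy_claim": re.compile(
        r"\b(authorized internal channel|reviewed and approved)\b", re.IGNORECASE),
    "attachment_action": re.compile(
        r"\b(open|review|see) the (attached|attachment|enclosed)\b", re.IGNORECASE),
}


def extract_cues(subject: str, body: str) -> set[str]:
    """Names of the cue families present anywhere in the subject or body."""
    text = f"{subject}\n{body}"
    return {name for name, pattern in CUE_PATTERNS.items() if pattern.search(text)}


def narrative_score(cues: set[str]) -> float:
    """Fraction of cue families present; co-occurrence matters more than any single cue."""
    return len(cues) / len(CUE_PATTERNS)


def campaign_clusters(messages, min_orgs=2):
    """Group cue-bearing messages by a crudely normalized subject; keep templates seen in several orgs.

    `messages` is an iterable of (org, subject, body) tuples.
    """
    orgs_by_template = defaultdict(set)
    for org, subject, body in messages:
        if extract_cues(subject, body):
            template = re.sub(r"\d+", "#", subject.lower()).strip()
            orgs_by_template[template].add(org)
    return {t: orgs for t, orgs in orgs_by_template.items() if len(orgs) >= min_orgs}


if __name__ == "__main__":
    cues = extract_cues(
        "Reminder: employer opened a non-compliance case log",
        "Issued through an authorized internal channel. Please review the attached document.",
    )
    print(sorted(cues), narrative_score(cues))
```

Keyword patterns like these are brittle and easy to evade; they only show the shape of the signal a trained model would be asked to produce.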

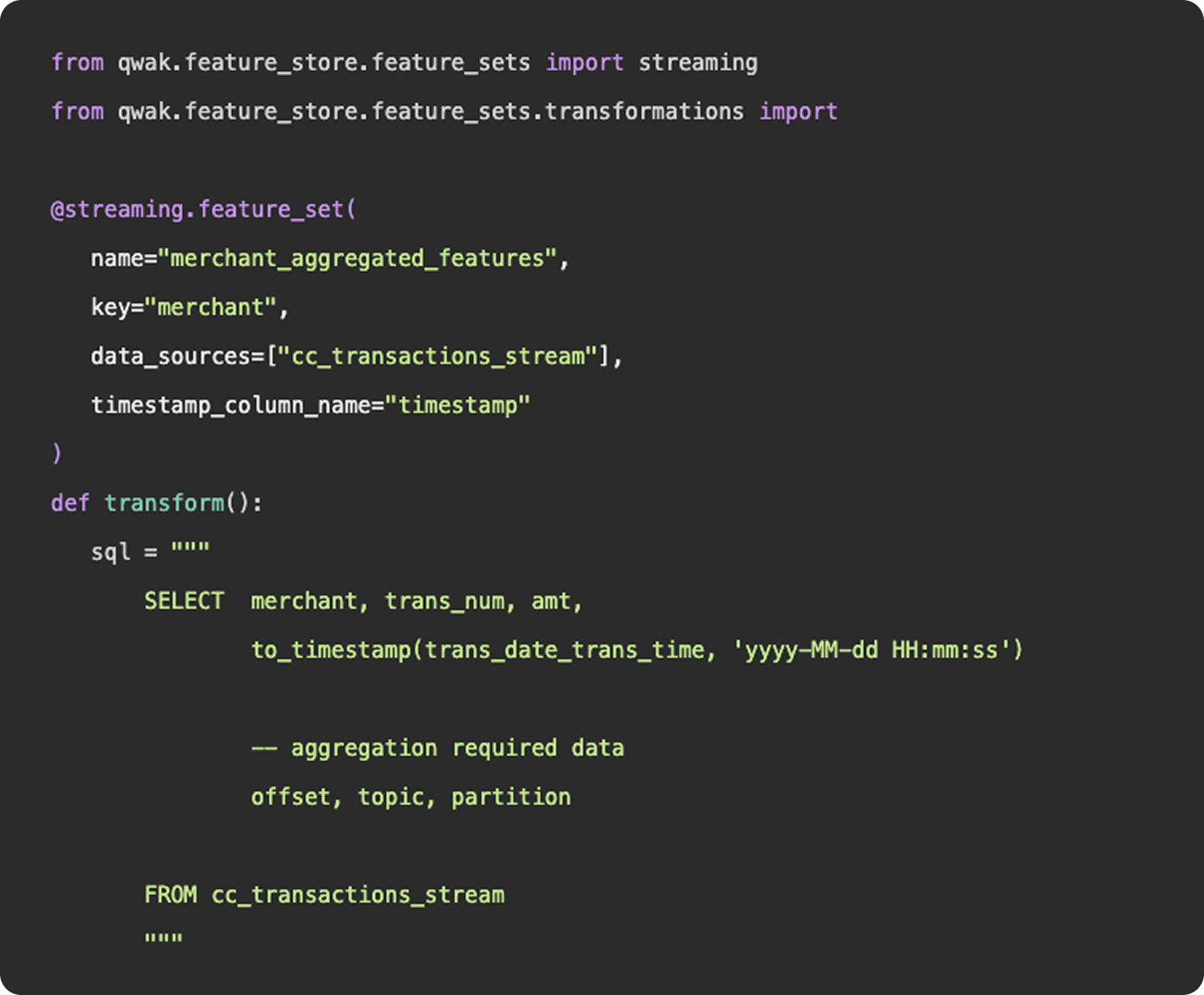

What is feature engineering

In practice, feature engineering is both science and a bit of witchcraft. It often involves iteration and experimentation to uncover hidden patterns and relationships within the data. For instance, a data scientist might transform raw sales data into features such as average purchase value, purchase frequency, or customer lifetime value, which can significantly boost the performance of a churn prediction model. By thoughtfully engineering features, practitioners can provide machine learning models with the most informative inputs, ultimately leading to better accuracy and more robust predictions.
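The sales example can be made concrete with a small sketch. It assumes raw rows of `(customer_id, order_amount)` covering a single 12-month window and uses a common simplified customer-lifetime-value formula (average purchase value × purchase frequency × an assumed lifespan); the data and parameters are invented for illustration.

```python
from collections import defaultdict

# Hypothetical raw sales rows (customer_id, order_amount), assumed to cover one 12-month window.
SALES = [
    ("c1", 40.0),
    ("c1", 60.0),
    ("c1", 50.0),
    ("c2", 200.0),
]


def customer_features(rows, window_months=12, expected_lifespan_years=3.0):
    """Derive per-customer features from raw order rows.

    Customer lifetime value uses the simplified formula
    avg_purchase_value * purchases_per_year * expected_lifespan_years;
    window_months and expected_lifespan_years are assumptions, not fitted values.
    """
    amounts = defaultdict(list)
    for customer_id, amount in rows:
        amounts[customer_id].append(amount)

    features = {}
    for customer_id, values in amounts.items():
        avg_value = sum(values) / len(values)
        purchases_per_year = len(values) * 12 / window_months
        features[customer_id] = {
            "avg_purchase_value": avg_value,
            "purchase_frequency": purchases_per_year,
            "customer_lifetime_value": avg_value * purchases_per_year * expected_lifespan_years,
        }
    return features


if __name__ == "__main__":
    for customer_id, feats in customer_features(SALES).items():
        print(customer_id, feats)
```

Customer `c1` ends up with an average purchase value of 50.0, a frequency of 3.0 purchases per year, and a lifetime value of 450.0 under these assumptions.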

What’s more?

- Incorporate more and more data sources

- Feature engineering platform

What is data engineering

Feature engineering can be seen as a subset of data engineering. It involves ingesting data from a source, applying a series of transformations, and making the final result available for a model to query during training. You can build feature engineering pipelines that resemble data engineering pipelines, with schedules, specific source and sink destinations, and availability for querying. However, this configuration only really applies once you have moved beyond the experimentation stage and determined a need for a consistent flow of new feature data.
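The ingest, transform, and publish shape of such a pipeline can be sketched minimally as below. The source, the two transformations, and the in-memory dictionary standing in for a queryable feature store are all hypothetical; scheduling is left out, on the assumption that a scheduler or workflow orchestrator would simply call `run_pipeline` on a cadence.

```python
from typing import Callable, Iterable

Row = dict
Transform = Callable[[list], list]


def run_pipeline(source: Callable[[], Iterable[Row]], transforms: list[Transform],
                 sink: dict, key: str) -> dict:
    """Ingest rows from `source`, apply `transforms` in order, and upsert results into `sink` by `key`."""
    rows = list(source())
    for transform in transforms:
        rows = transform(rows)
    for row in rows:
        sink[row[key]] = row  # the dict stands in for a queryable feature store
    return sink


def drop_missing_amount(rows):
    """Cleaning step: discard rows without an amount."""
    return [r for r in rows if r.get("amount") is not None]


def add_is_large_order(rows, threshold=100.0):
    """Feature step: flag orders at or above a hypothetical threshold."""
    return [{**r, "is_large_order": r["amount"] >= threshold} for r in rows]


def orders_source():
    # Hypothetical source; in practice this would read from a table, file, or stream.
    return [
        {"order_id": 1, "amount": 40.0},
        {"order_id": 2, "amount": None},
        {"order_id": 3, "amount": 250.0},
    ]


if __name__ == "__main__":
    store = run_pipeline(orders_source, [drop_missing_amount, add_is_large_order], sink={}, key="order_id")
    print(store)
```

Keeping each transformation a plain function over rows makes it easy to experiment with steps during exploration and then reuse the same functions once the pipeline is put on a schedule.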

How feature engineering differs from data engineering

1. Functions

Functionally, nothing differentiates data from features: both are data points. Where feature engineering and data engineering really differ is in the objectives and motivations behind their pipelines. In general, data engineering serves a broader, more unified purpose than feature engineering. Data engineering platforms are built to be flexible and universal, ingesting various types and sources of data into a unified storage location where any number of transformations and use cases can be applied. The intent of a well-constructed fact table or gold layer in a data lake is to provide a single source of truth that answers many different questions, produces many reports, and can be consumed by many downstream customers.

2. Practice

And in practice, an organization’s data engineering team is responsible for curating and maintaining all data pipelines, not just those related to machine learning. These pipelines may power BI dashboards used by the C-suite, auditing reports that feed payroll, or event logs that show a user’s history of actions within the application.

Feature engineering, on the other hand, serves a specific purpose: finding the tailored inputs and columns that will generate the best predictive results for a machine learning model. Data scientists and machine learning engineers are not tasked with developing a universal data model that ingests every data point in an organization; they just need to select, curate, and clean the data that powers their models.

3. Machine learning

Now, as machine learning teams grow and incorporate more and more data sources into their models, their feature engineering platform may start to resemble a larger data engineering platform in the tools and methodologies it employs. But the intent is not to establish flexible data models for use throughout the organization; it is simply to power their machine learning models.