Engineered Trust: Detecting Pretexting Attacks that Play the Long Game

Many breaches don't start with urgency; they start with a casual conversation.

Long-con social engineering campaigns are designed to feel like legitimate work, using time, repetition, and small commitments to turn skepticism into compliance. This piece examines the psychological mechanics behind these pretexting attacks and why traditional defenses fail once trust has already been operationalized.

Most discussions of social engineering focus on “quick and easy” pretexts that rely on urgency and immediacy. This model is incomplete. The more dangerous attacks are not urgent; they are patient. The long con is simple: find a victim, build their trust over time, then make the request when resistance is lowest.

Unlike romance scams, which are based on developing intimate relationships, long cons typically focus on building professional credibility within ordinary business contexts. Like the Axios attack, they are multi-stage campaigns that can take weeks or months to develop. The attacker doesn’t need your friendship or respect. They need you to believe they are a legitimate actor.

In September 2025, U.S.-based CISO Walter Williams documented a three-month campaign that targeted him via LinkedIn under the pretext of a senior leadership role at Gemini Crypto. Contact moved from LinkedIn to SMS to WhatsApp and included a deepfaked video interview and an e-signed contract. The attackers sustained contact even though the target was suspicious from the outset. He published his account because he recognized how effective the playbook would have been against someone less alert.

In the telling, many such accounts are reduced to ‘rapport’ building. This reflects how people experience these attacks, but it obscures the underlying social processes that build and sustain trust. The ‘art’ of social engineering requires the careful coordination of social cues in the moment. To stop these attacks, we need to understand how cognitive sediment accumulates: how repeated interactions create rapport and a feeling of legitimacy.

How Sunk Cost Bias Makes Workers Vulnerable to Social Engineering

Certain features of the CISO’s account suggest a sunk cost fallacy: because we have invested time and resources in a person or activity, we continue to do so in hopes that we will get a return. He notes:

“They were investing a lot of time into this — three months of constant messages — and had some interesting techniques — the e-signed contract [tied to a Gmail address].”

But sunk costs are just the beginning. An exclusive focus on the cold, cognitive side fails to address what we are investing in: a relationship. The experience of ‘rapport’ is more concrete than investment; it is the development of an interpersonal, professional relationship. As we apply for a job or negotiate a contract over days, weeks, and months, we imagine ourselves in a future partnership, one that becomes more real as discussions proceed. This is what the attack is engineering.

The Reciprocity Trap: How Attackers Create a Need to Respond

Reciprocity is a primary moral driver; humans and many nonhuman animals alike exhibit it. This social impulse works without much thought or control, creating a sense of obligation and an imperative to return favors.

In the job-seeking CISO’s case, he had previously applied for a job at the company through legitimate channels and received only an automated response. When contact arrived months later, he engaged despite his suspicions:

“the reason I gave them even a moment of my time - as the initial contact was odd enough to signal something was wrong - was because I had reached out to them [previously] and they might be following up with me.”

In this case, he expected them to reciprocate with contact and was looking to build a more substantive relationship.

In the pig butchering playbook, reciprocity is engineered more deliberately. Targets are given small early investment returns before any significant commitment is requested. Professional culture creates similar conditions: a recruiter who invests time in you, an overseas counterpart who follows up consistently, or a platform that appears to deliver results — each creates feelings of interpersonal imbalance that the next request is designed to resolve.

The Compliance Cascade: Social Engineering One Yes at a Time

The foot-in-the-door technique is the well-documented tendency for small initial commitments to lower resistance to larger subsequent ones. It works because we want to remain consistent and don’t notice that we’re drifting toward an adversary’s goal. Each small agreement shifts our internal point of reference. When we agree once, each subsequent request feels harder to refuse.

Having moved a conversation to WhatsApp, we are already slightly more committed than we were. Having attended one video call, we treat the next as a natural continuation. At Arup, that sequence ended in an eight-figure fraud.

Typically described as a deepfake fraud, the Arup incident in early 2024 involved a finance employee who made a series of transfers totaling about $25.6 million after a video call with what appeared to be the company’s CFO and several senior colleagues. This description is accurate but incomplete. The employee had initially suspected the invitation was a phishing attempt. What changed their assessment was the social architecture of the call: multiple participants, a familiar professional context, and a request that fit the established pattern of their working relationship with leadership. The employee was not making an isolated decision. They were making the next decision in a sequence they had already partly endorsed.

Attackers planned this. The pig butchering playbook permits small early investment withdrawals specifically to establish that the platform is legitimate. The first yes is cheap. It funds all the ones that follow.

Familiarity: Accumulating Trust Dividends

The sense of familiarity is an unreliable social cue. People can recognize a face in under a second, even one they have seen only briefly, yet often fail to recall where they know the person from. This loose coupling of person and context creates a cognitive vulnerability that can be exploited.

We prefer something the more we see it. Psychologists refer to this as the mere exposure effect: repeated contact builds trust, independent of whether that trust has been earned. When the job-seeking CISO noted that his attackers “were investing time” into him, they were building this sense of familiarity. This doesn’t require liking; this is a business relationship. The key is repetition: the daily message, the check-in, and the follow-up are not relationship maintenance. Each interaction adds another layer of cognitive sediment, reinforcing recognition without verification.

As with product placement, we begin to trust what we’ve seen before. When people are shown the names of unknown people, they can later mistake those names for those of celebrities. In social engineering, this plays out at the organizational level: use a recognizable name, and you unlock the pathway to credibility.

Employees don’t enter these situations looking to second-guess a co-worker, supervisor, or prospective employer. They are looking for a reason to trust them. Their attention is elsewhere: answering questions to get a job, hitting their KPIs, or going to lunch. A sense of familiarity short-circuits the scrutiny we would normally apply. But we rely on our instincts at our peril.

From Social Manipulation to Security Response

Simple pretexts are cheap, but they are also familiar. Even busy service and help desk agents can recognize these tactics, which triggers deliberation and the rejection of the request. Phishing emails are all too familiar, and Nigerian princes and dubious investment opportunities are too common to go unrecognized. Controls designed for urgency and authority, however, fail against campaigns that persist over weeks and months.

When a relationship crosses channels (e.g., from LinkedIn to WhatsApp, or from email to a call), persists over time, and introduces escalating commitments, treat it as the start of a security event rather than a business interaction.
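The rule above can be expressed as a simple check over a log of interactions. The sketch below is a minimal illustration, not a production detector. It assumes a hypothetical `Interaction` record with a timestamp, a channel name, and a hand-assigned commitment level. The 0 to 3 commitment scale and the thresholds are assumptions, not validated values. The check flags a relationship only when all three conditions hold.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Interaction:
    """One touchpoint with an external contact (hypothetical schema)."""
    when: datetime
    channel: str      # e.g. "linkedin", "sms", "whatsapp", "email", "call"
    commitment: int   # assumed scale: 0 = chat, 1 = channel move, 2 = call/interview, 3 = contract, payment, or access


def is_security_event(history, min_channels=2, min_days=14):
    """Return True when a relationship crosses channels, persists, and escalates.

    min_channels and min_days are illustrative thresholds, not recommendations.
    """
    if len(history) < 2:
        return False
    history = sorted(history, key=lambda i: i.when)
    crosses_channels = len({i.channel for i in history}) >= min_channels
    persists = history[-1].when - history[0].when >= timedelta(days=min_days)
    # Escalation: the peak commitment exceeds where the relationship started.
    escalates = max(i.commitment for i in history) > history[0].commitment
    return crosses_channels and persists and escalates


# A campaign shaped loosely like the one described earlier (dates are invented).
start = datetime(2025, 6, 1)
campaign = [
    Interaction(start, "linkedin", 0),
    Interaction(start + timedelta(days=10), "sms", 1),
    Interaction(start + timedelta(days=40), "whatsapp", 2),
    Interaction(start + timedelta(days=80), "whatsapp", 3),
]
print(is_security_event(campaign))  # True
```

Requiring all three signals keeps the rule narrow. A more cautious policy might escalate when any two are present.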

When the payoffs for compromising a help desk run into the millions, the long con is worth the investment. AI has made that investment cheaper, and models keep improving in speed, accuracy, and availability.

But it’s not AI that makes the con work. Long cons succeed because they simulate a real relationship. At some point, we cross a threshold where familiarity, reciprocity, and small requests go unnoticed. The cognitive sediment has tipped the scales against us. If sediment accumulates, we need to periodically dredge these unexamined relationships: Who made first contact? Are we speaking with the same party? What kinds of requests are they making? What access do they have? Threats thrive in the spaces between unquestioned assumptions and implicit answers.
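The four review questions can be turned into a repeatable checklist. The sketch below assumes a hypothetical `Relationship` record and an illustrative keyword list for sensitive requests. In a real review, these fields would come from whatever records an organization keeps, such as CRM, ticketing, or identity data. The keyword match is only a crude stand-in for human judgment.

```python
from dataclasses import dataclass, field

# Hypothetical keywords marking requests that warrant a second look.
SENSITIVE_KEYWORDS = ("payment", "transfer", "wire", "password", "credential",
                      "install", "contract", "access")


@dataclass
class Relationship:
    """What we know about an ongoing external relationship (hypothetical schema)."""
    counterparty: str
    we_made_first_contact: bool
    observed_identities: set = field(default_factory=set)  # emails, numbers, accounts seen so far
    verified_identities: set = field(default_factory=set)  # confirmed through a known-good channel
    requests: list = field(default_factory=list)           # short descriptions of what they asked for
    access_granted: list = field(default_factory=list)     # systems or data they can now reach


def dredge(rel):
    """Answer the four review questions and return findings that need follow-up."""
    findings = []
    # 1. Who made first contact?
    if not rel.we_made_first_contact:
        findings.append("They initiated contact: confirm how they found us.")
    # 2. Are we speaking with the same, verified party?
    unverified = rel.observed_identities - rel.verified_identities
    if unverified:
        findings.append(f"Unverified identities in use: {sorted(unverified)}")
    # 3. What kinds of requests are they making?
    sensitive = [r for r in rel.requests
                 if any(k in r.lower() for k in SENSITIVE_KEYWORDS)]
    if sensitive:
        findings.append(f"Sensitive requests: {sensitive}")
    # 4. What access do they have?
    if rel.access_granted:
        findings.append(f"Access held: {rel.access_granted}")
    return findings


recruiter = Relationship(
    counterparty="Recruiter (claimed)",
    we_made_first_contact=False,
    observed_identities={"recruiter@gmail.com", "+1-555-0100"},
    requests=["Share availability", "Sign contract via e-signature"],
)
for finding in dredge(recruiter):
    print(finding)
```

An empty result does not mean a relationship is safe. It means none of these four questions currently raises a flag.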

Successful defenses require moving past cognitive biases. This is no trivial matter. Training is the default response, an organizational reflex, but awareness of biases does not reliably interrupt them in practice. The standard advice is to introduce friction strategically in business processes at key stages such as verification and authentication, train employees to slow down, and increase scrutiny on larger requests.

These recommendations fail because they ignore employees’ cognitive load. Whatever knowledge is learned can quickly be buried by the needs of the moment. Reminders and alerts can be overwhelming, and employees will become desensitized.

We must accept that employee workload is a critical constraint. Cognitive bandwidth that is already strained cannot be stretched to cover more scrutiny. For many organizations, particularly SMEs, managing workload to create more bandwidth isn’t possible. Financial and human resources may already be stretched to their limits, and the staffing levels needed to maintain employee awareness simply aren’t available.

Structural Defenses for a Structural Problem

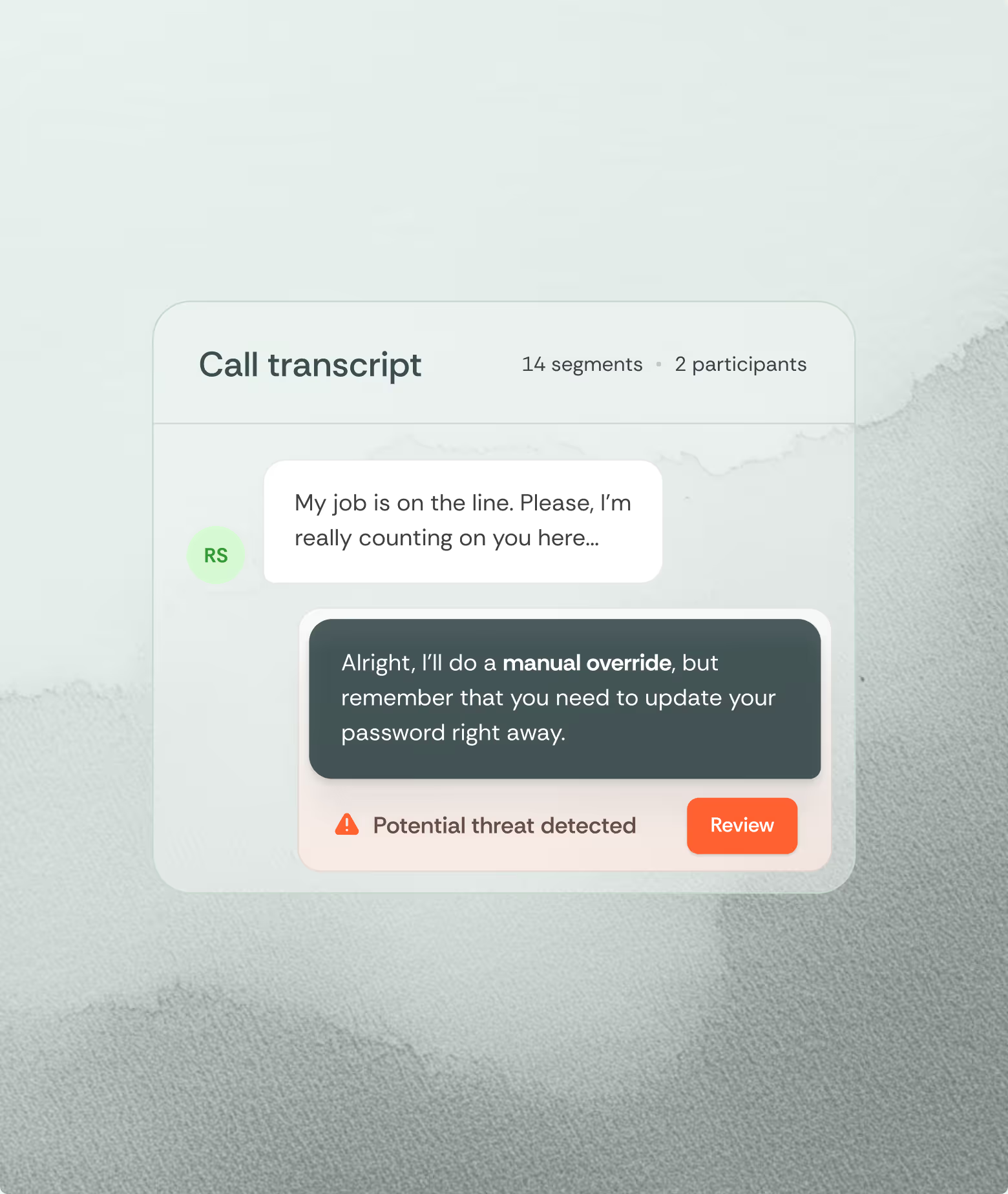

When human resources are inadequate, identify an appropriate AI tool. First, assess whether your organization has the tools needed to analyze its communication channels for social engineering. Not all tools are equally well suited: what works for email may not work for calls. Consider conversational AI that can analyze exchanges and detect social cues of deception, persuasion, and override directives. It can track the course of a conversation and raise alerts at critical decision points, nudging agents to revisit a request that fails to follow security protocols.
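Here is a deliberately simple sketch that makes the idea of tracking cues across a conversation concrete. It is not a fine-tuned model. Instead, it uses hypothetical keyword patterns for four cue types and accumulates them over the whole exchange. It raises an alert only when a sensitive request arrives after enough cues have built up. The patterns, the request list, and the threshold of 3 are all assumptions for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical cue lexicon; a real system would use a trained model, not keywords.
CUES = {
    "urgency": re.compile(r"\b(urgent|asap|right away|immediately|before end of day)\b", re.I),
    "authority": re.compile(r"\b(ceo|cfo|director|executive|on behalf of)\b", re.I),
    "override": re.compile(r"\b(skip|bypass|just this once|don't tell|no need to verify)\b", re.I),
    "rapport": re.compile(r"\b(as we discussed|like last time|trust you)\b", re.I),
}
# Hypothetical markers of a request that should pass a security check.
REQUEST = re.compile(r"\b(transfer|wire|reset|password|mfa|credentials|gift cards?|install)\b", re.I)


@dataclass
class Alert:
    turn: int    # index of the turn that triggered the alert
    cues: dict   # cumulative cue counts at that point
    text: str


def monitor(turns, threshold=3):
    """Accumulate cues across turns; alert when a request arrives after enough cues."""
    counts = {name: 0 for name in CUES}
    alerts = []
    for idx, text in enumerate(turns):
        for name, pattern in CUES.items():
            counts[name] += len(pattern.findall(text))
        if REQUEST.search(text) and sum(counts.values()) >= threshold:
            alerts.append(Alert(idx, dict(counts), text))
    return alerts


conversation = [
    "Hi, this is the CFO's office. As we discussed, the acquisition is confidential.",
    "Thanks for joining the call with the team.",
    "It's urgent: please wire the first payment and skip the usual callback.",
]
for alert in monitor(conversation):
    print(f"Turn {alert.turn}: revisit request ({alert.cues})")
```

No single turn here looks alarming on its own. The alert fires because the cues accumulated across the exchange before the request arrived, which is the pattern the paragraph describes.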

Unlike people, who might miss or forget a crucial signal in a long exchange or an otherwise invisible campaign, fine-tuned AI can attend to signs of pretexting, big or small. It can follow the course of the conversation, weigh the evidence, and make the opaque patterns of human communication visible.

However, AI is only as useful as the security practices around it. Cybersecurity professionals must integrate these systems into workflows responsibly. Understanding each system’s capabilities, how its signals are integrated, and which gaps remain is key.

There is no substitute for good security practices, but we can strengthen security culture by examining the foundations of trust. The sediment will continue to accumulate. The question is whether your organization has the habits, roles, and tools to dredge it before adversaries exploit it.

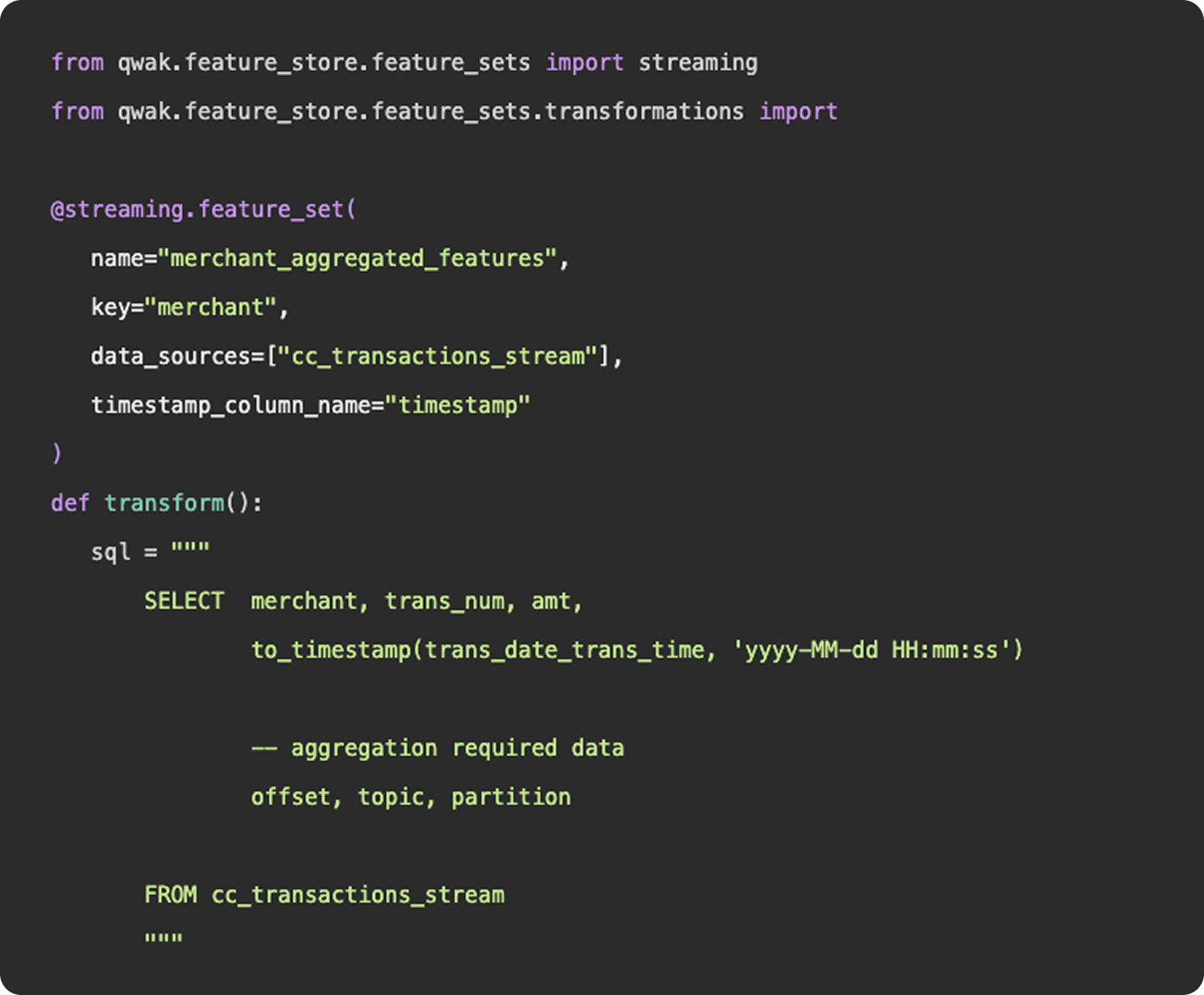

What is feature engineering

In practice, feature engineering is part science and part witchcraft. It often involves iteration and experimentation to uncover hidden patterns and relationships within the data. For instance, a data scientist might transform raw sales data into features such as average purchase value, purchase frequency, or customer lifetime value, which can significantly boost the performance of a churn prediction model. By thoughtfully engineering features, practitioners can provide machine learning models with the most informative inputs, leading to better accuracy and more robust predictions.
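Here is a small illustration of the sales example: it turns raw transaction rows into one feature row per customer. The sketch uses invented toy data and makes two simplifying assumptions. First, "lifetime value" is a naive historical proxy (total spend to date) rather than a projected value. Second, "purchase frequency" is measured in orders per 30-day month over the observed window.

```python
from collections import defaultdict
from datetime import date

# Toy raw sales rows: (customer_id, order_date, amount). Invented for illustration.
SALES = [
    ("c1", date(2024, 1, 5), 40.0),
    ("c1", date(2024, 2, 10), 60.0),
    ("c1", date(2024, 3, 15), 50.0),
    ("c2", date(2024, 1, 20), 200.0),
]


def customer_features(rows, as_of):
    """Aggregate raw transactions into one feature row per customer."""
    orders = defaultdict(list)
    for customer_id, order_date, amount in rows:
        orders[customer_id].append((order_date, amount))

    features = {}
    for customer_id, history in orders.items():
        amounts = [amount for _, amount in history]
        first_order = min(order_date for order_date, _ in history)
        # Observation window in ~30-day months, floored at one month to avoid tiny denominators.
        months_observed = max((as_of - first_order).days / 30.0, 1.0)
        features[customer_id] = {
            "avg_purchase_value": sum(amounts) / len(amounts),
            "purchase_frequency": len(amounts) / months_observed,  # orders per month
            "lifetime_value": sum(amounts),  # naive proxy: total spend to date
        }
    return features


for customer_id, row in customer_features(SALES, as_of=date(2024, 4, 1)).items():
    print(customer_id, row)
```

To train a model, these rows would then be joined to churn labels. Which features actually help is an empirical question, and answering it is the iteration and experimentation described above.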

What is data engineering

As we mentioned above, feature engineering is certainly a subset of data engineering. It involves ingesting data from a source, applying a series of transformations, and making the final result available for a model to query during training. You can construct feature engineering pipelines to resemble data engineering pipelines, with schedules, specific sources and sinks, and query access. However, this configuration only really applies once you have moved past the experimentation stage and determined that you need a consistent flow of new feature data.
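The three stages named above can be sketched in a few lines: ingest from a source, apply transforms, and publish for querying. This is a minimal in-memory illustration using hypothetical names (`FeaturePipeline`, `run`, `query`), not any particular framework's API. In production, a scheduler would trigger `run`, and a real table or feature store would replace the dictionary sink.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Iterable, List

Row = Dict[str, Any]


@dataclass
class FeaturePipeline:
    """Minimal source -> transforms -> sink pipeline (hypothetical, not a real framework)."""
    source: Callable[[], Iterable[Row]]
    transforms: List[Callable[[List[Row]], List[Row]]] = field(default_factory=list)
    sink: Dict[str, List[Row]] = field(default_factory=dict)  # in-memory stand-in for a feature table

    def run(self, version: str) -> None:
        """Ingest, apply each transform in order, and publish the result under a version key."""
        rows = list(self.source())
        for transform in self.transforms:
            rows = transform(rows)
        self.sink[version] = rows

    def query(self, version: str) -> List[Row]:
        """Return the published rows for a version, or an empty list if none exist."""
        return self.sink.get(version, [])


def read_orders() -> List[Row]:
    # Stand-in source; a real pipeline would read from a database, files, or a stream.
    return [
        {"customer": "c1", "amount": 40.0, "items": 2},
        {"customer": "c2", "amount": None, "items": 1},
        {"customer": "c3", "amount": 90.0, "items": 3},
    ]


def drop_missing_amounts(rows: List[Row]) -> List[Row]:
    return [r for r in rows if r["amount"] is not None]


def add_value_per_item(rows: List[Row]) -> List[Row]:
    return [{**r, "value_per_item": r["amount"] / r["items"]} for r in rows]


pipeline = FeaturePipeline(read_orders, [drop_missing_amounts, add_value_per_item])
pipeline.run("orders_v1")
print(pipeline.query("orders_v1"))
```

During experimentation, the same transforms can simply be called by hand in a notebook. Wrapping them in a pipeline object only pays off once the feature data needs to be refreshed on a schedule.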

How feature engineering differs from data engineering

1. Functions

Functionally, nothing differentiates data from features: both are data points. Where feature engineering and data engineering really differ is in the objectives and motivations behind the pipelines. In general, data engineering serves a broader, more unified purpose than feature engineering. Data engineering platforms are built to be flexible and universal, ingesting many types and sources of data into a unified storage location where any number of transformations and use cases can be applied. A well-constructed fact table or gold layer in a data lake is intended to provide a single source of truth that answers many different questions, produces many reports, and can be consumed by many downstream customers.

2. Practice

In practice, an organization’s data engineering team is responsible for curating and maintaining all data pipelines, not just those that relate to machine learning. These pipelines may power BI dashboards used by the C-suite, auditing reports that feed payroll, or event logs that show a user’s history of actions within the application.

Feature engineering, on the other hand, serves a specific purpose: finding the tailored inputs and columns that will generate the best predictive results for a machine learning model. Data scientists and machine learning engineers are not tasked with developing a universal data model that ingests every data point in an organization; they just need to select, curate, and clean the data needed to power their models.

3. Machine learning

Now, as machine learning teams grow and incorporate more and more data sources into their models, their feature engineering platform may start to resemble a larger data engineering platform in the tools and methodologies it employs. But the intent is not to establish flexible data models that can be used throughout the organization; it is simply to power their machine learning models.