Awareness Without Agency: Why Security Culture Needs Empowered People

Most organizations measure security awareness. Far fewer try to build it. The gap isn't a training problem — it's a culture problem. This piece examines why, and what organizations must build in its place.

The RSAC session I walked into was about training efficacy metrics: how organizations measure security awareness programs and advocate for them to leadership. But despite the facilitator's best efforts, the conversation kept drifting, and an undertone kept surfacing. Many practitioners were skeptical, describing their experiences with a quiet exhaustion. These were people with the weight of the world (or at least one company) on their shoulders.

A few spoke up, telling each other to draw a line in the sand, to take a stand against unrealistic goals and requests from management. But it was clear that many didn't feel that they had the time, tools, or temerity to challenge a system. They needed a job. They had deadlines. They were under-resourced. And the alerts kept coming.

Everyone came to that room and to their jobs wanting to do good work. Rather than being given protected time to think, strategize, and respond, they watched the compliance checkboxes accumulate. They inherited a training model. At one point, that model was good enough: organizations were small, threats were comparatively simple. But somewhere along the way, someone stopped updating it. They now follow industry standards that have quietly fossilized.

Outside that session, RSA was filled with energy. People talked a lot about agentic systems. If the agents have the right tools, they can do incredible things. That's the pitch. And increasingly, they are, both as defenders and as a new, untested attack surface.

But before we start giving artificial agents tools, we need to ask a harder question: do our human employees have the tools they need to work alongside them? When systems fail, are misused, or are actively manipulated, our people will need to respond.

A Culture of Disempowerment

The culture of disempowerment is built one layer at a time, like sediment falling passively to the ocean floor while the real activity occurs near the surface. This is security training. Time and pressure help form the layers, but the result is not truly designed. It's reactive. Update for the latest attack. Check box. Cover basic compliance features. Check box. Knowledge test. Check box.

The failure of this model is no longer just a practitioner complaint. The U.S. Army recently eliminated its annual cybersecurity training requirement after finding that compliance training produced no measurable improvement in security outcomes.

Even when time and effort go into training design, training's bad reputation can undermine the result: fast-forward the video, repeat next year. Multiply this across the human layer of your organization. Now you have a problem.

This is the training machine, a contraption developed by the old gods of cybersecurity, inherited by practitioners who know it's broken but can't stop it. Most assume that formal training is the only solution to stop social engineering and improve cyber hygiene.

The disempowerment I observed at RSA is not unique. I've seen it in students, government workers, and in healthcare environments. People often feel that they are subject to a system — and not truly a part of it. They didn't design policies or training programs. They didn't select metrics. They don’t have a chance to share their knowledge. Yet they must absorb the accountability for outcomes they were never given the resources to fix.

This is the architecture of learned helplessness that defines many organizations.

From Awareness to Agency

To change from a culture of learned helplessness to one of agency, we need to empower our employees. We need to ask what our employees want and need. We need to listen to what they understand and know.

Decades of self-efficacy research make this clear: people change their behavior when they are motivated and experience genuine efficacy, not when they are merely aware of a problem. That means having the tools, getting feedback, and seeing the connection between their actions and outcomes.

We can see these shifts in security awareness maturity in five phases:

Phase 0. Inattentional Blindness.

Some organizations are not aware that cultural problems exist. They might only have idiosyncratic metrics such as training completion rates or time to resolution for help desk tickets but might not have accessible phishing click rates or understand the kinds of security policy violations that occur. These organizations don't understand their employees' experience of security culture, nor whether they feel equipped to act. Their attention is elsewhere, focused on KPIs or concrete technical threats rather than the cognitive, behavioral, or social dimensions of security risk.

Cultural inertia carries them forward until a breach, an audit, or a room full of exhausted practitioners forces the question. If organizations follow the same path, they will achieve the same result: breaches will occur and social engineering will succeed.

Phase 1. Problem Finding: Implicit Awareness.

Practitioners begin to sense that a definable problem exists. This takes time, as organizational environments are often wicked — providing inconsistent or incomplete feedback. Available compliance and incident metrics do not capture these emerging concerns.

Without a systematic framework, this information cannot be gathered and rigorously assessed. Practitioners can't fully understand the problem and can't identify effective interventions. This is the stage of security instincts without theory. This phase is often associated with fear and anxiety. Practitioners aren't standing still; they're buried. The volume of incidents, tickets, and competing priorities grows, leaving them no time to pause and clearly define and communicate the problem. Employees might look to their leadership for clarity and guidance, but none is provided.

When training is provided, it might not be aligned with practitioners' day-to-day activities. Without relevance, employees are unlikely to take it seriously.

Phase 2. Problem Framing: Pattern Awareness.

In time, organizations pass into a different phase. Patterns become more familiar and practitioners begin to develop and incorporate formal detection and education paradigms. Training can now be targeted to address specific needs.

Many organizations stall here. A gap emerges between understanding the problem in broad strokes and the path toward specific solutions. Often the answer appears to be more and better training: if they can only hone employees’ understanding, surely that will resolve the issue. This approach gives employees knowledge without tools.

This is where learned helplessness can take hold. Practitioners are aware of the problem but not given the tools and resources to address it. When they try, they often fail.

Phase 3. Resources and Tool Availability.

Vendors and internal security architects have begun paying attention to these unmet needs. Tools are becoming available that align with specific decision failures rather than generic compliance gaps. Whether through internal development or market availability, practitioners gain access to tools that align with the problems they face. They might come from different vendors and require different kinds of skills, but a paradigm begins to stabilize — one that provides a cognitive scaffold for action.

Even when tools are available, practitioners often lack the bandwidth to test and implement them. The same overload that created the gap now prevents closing it. And it keeps growing.

The critical tension has shifted from procurement to permission. Organizations must begin to integrate these tools into their decision-making processes. Practitioners must learn when and where to trust them. This doesn’t typically follow from training. Organizations must reassign accountability when tools change how work is conducted. Without that trust, organizations cannot fully realize the potential of these tools.

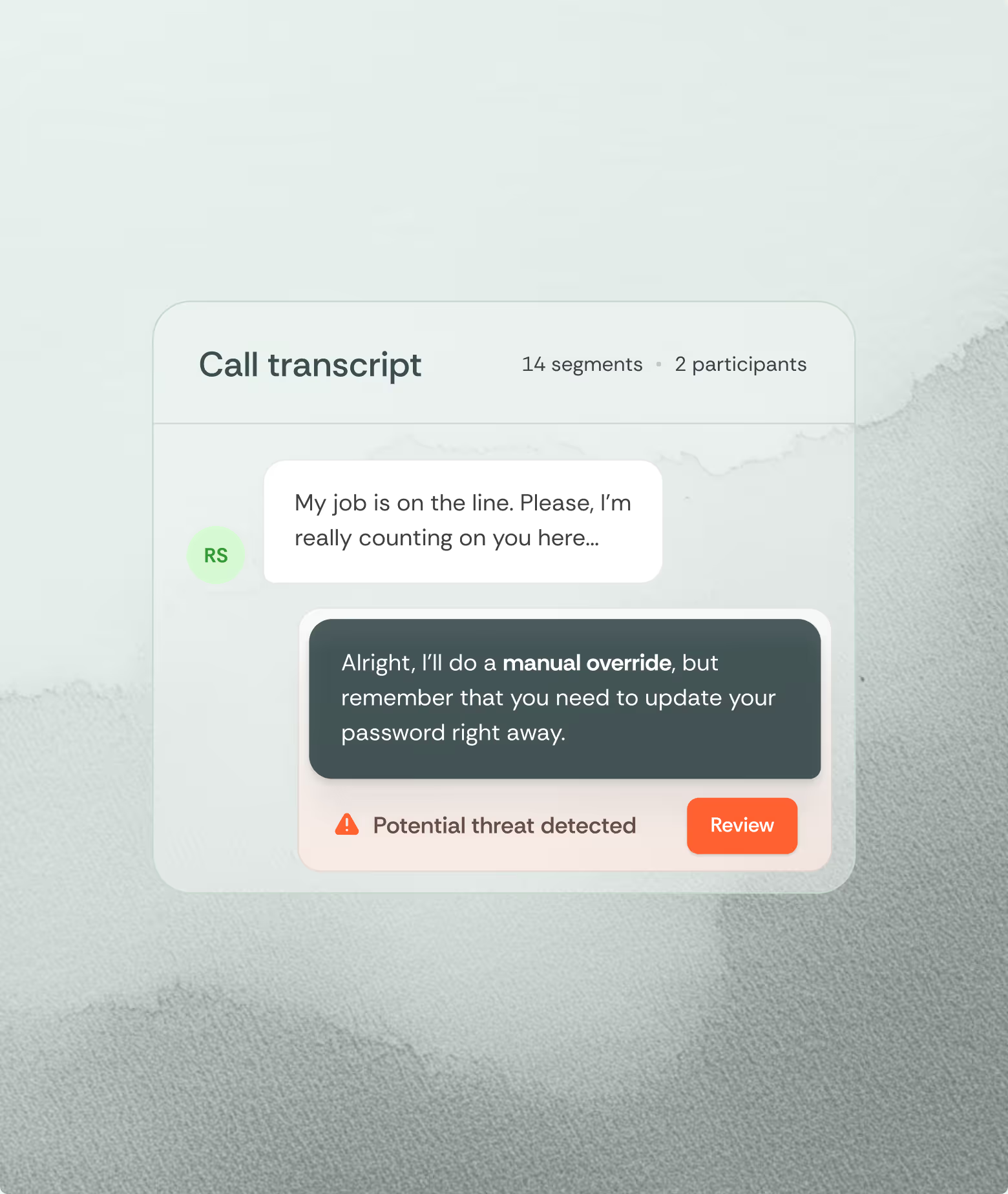

Tools that detect security policy violations and social engineering as they occur, rather than training people to recognize them after the fact, represent this shift most clearly.

Phase 4. Integration, Efficiency, and Mastery.

As training and trust in tools become normalized and integrated into essential workflows, those tools enable rapid responses. Practitioners no longer doubt what they can do — they know it. While overconfidence remains a risk, they can now begin to predict future outcomes, allocate their attention and security resources where they are most needed, and patch people, procedures, and systems that are vulnerable.

Tool mastery is defined by fluency: knowing where to go to retrieve information, knowing what responses will work. Even when practitioners do not or cannot know something themselves, they have the right tools to support their decision-making.

In this phase, security culture is fully realized: employees perform their jobs unencumbered, remain aware of potential threats, and can respond in real time. The systems that protect them are integrated into their mental workflow.

These are phases, not stages. Organizations don't graduate from one phase to the next permanently. New technologies, new threats, new leaders, and new personnel can push them back into a different phase at any time. Resilient organizations build cultures that expect disruption and adapt rather than break when confronted with it.

Help the Agents in the Room

Many vendors at RSAC were advocating for their AI agents. In many cases, that term was only loosely defined — a tension the vendors themselves acknowledged when I asked more questions.

Regardless of the definition, the agency and centrality of these systems in organizations have made them part of both the defense and the threat landscape.

But even as these systems become increasingly important, we cannot allow them to overshadow the human agents who are already in the room. Those people remain the ones accountable for outcomes.

The practitioners in that training session were intelligent people. Like many in the field, they understood the pain points. They were passionate, committed to their organizations, and invested in outcomes. What they lacked was time, tools, and organizational permission to act. Leadership must identify these gaps and empower the people closest to the problem. We cannot have agents without agency.

Organizational leaders must ask themselves about their organization’s security awareness. This must be an honest conversation that engages help desk agents, SOC analysts, and management. Only by understanding an organization’s situational risk awareness can you begin to close the gap between policy and practice.

We must also be realistic about the limits of training and assessment. Annual sessions and behavioral nudges cannot boost awareness on their own. If we cannot give employees the time and resources they need, we need an alternative. We need to build smart tools that can close the gap.

Security culture does not fail because of hardware or software. It fails when organizations continue to blame their people instead of protecting them.

What is feature engineering

In practice, feature engineering is both science and a bit of witchcraft. It often involves both iteration and experimentation to uncover hidden patterns and relationships within the data. For instance, a data scientist might transform raw sales data into features such as average purchase value, purchase frequency, or customer lifetime value, which can significantly boost the performance of a churn prediction model. By thoughtfully engineering features, practitioners can provide machine learning models with the most informative inputs, ultimately leading to better accuracy and more robust predictions.
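As a rough illustration of the sales example above, the following sketch turns raw transactions into per-customer features. Everything in it is an assumption made for illustration: the record layout, the `build_customer_features` name, the 90-day window, and the use of spend within the window as a crude stand-in for customer lifetime value.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical raw sales records: (customer_id, purchase_date, amount).
RAW_SALES = [
    ("c1", date(2024, 1, 5), 40.0),
    ("c1", date(2024, 2, 5), 60.0),
    ("c1", date(2024, 3, 5), 50.0),
    ("c2", date(2023, 6, 1), 999.0),  # falls outside the window used below
    ("c2", date(2024, 1, 20), 200.0),
]


def build_customer_features(sales, as_of, window_days=90):
    """Aggregate raw transactions into one feature row per customer."""
    window_start = as_of - timedelta(days=window_days)

    # Keep only purchases inside the observation window.
    amounts_by_customer = defaultdict(list)
    for customer_id, purchase_date, amount in sales:
        if window_start <= purchase_date <= as_of:
            amounts_by_customer[customer_id].append(amount)

    features = {}
    for customer_id, amounts in amounts_by_customer.items():
        features[customer_id] = {
            "avg_purchase_value": sum(amounts) / len(amounts),
            # Purchase frequency, normalized to purchases per 30 days.
            "purchases_per_30_days": len(amounts) * 30 / window_days,
            # Crude proxy for lifetime value: total spend in the window.
            "spend_in_window": sum(amounts),
        }
    return features


features = build_customer_features(RAW_SALES, as_of=date(2024, 3, 31))
```

Each entry in `features` could then serve as one input row for a churn model. Which aggregates actually help is the part that takes iteration and experimentation.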

What is data engineering

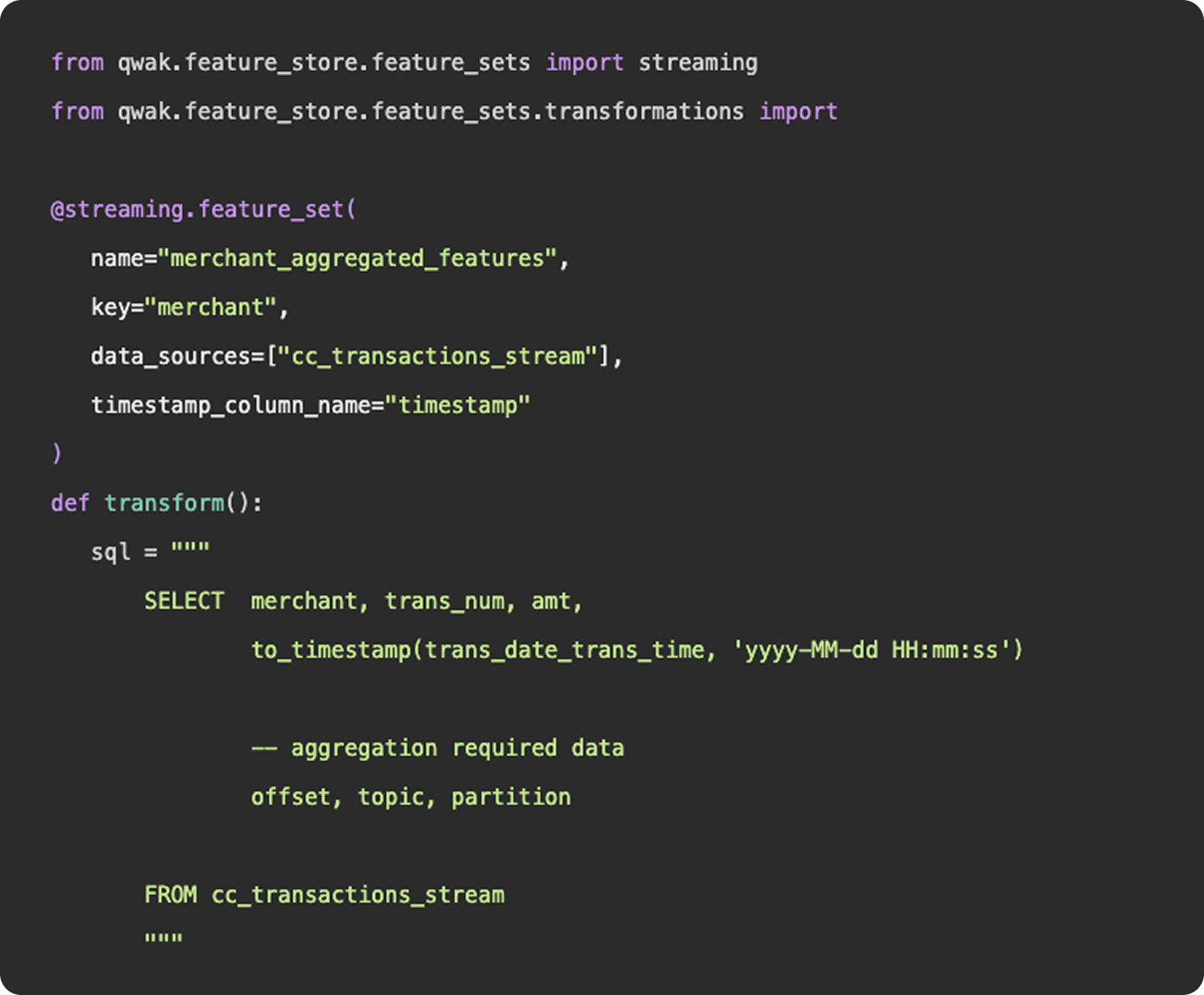

As we mentioned above, feature engineering is certainly a subset of data engineering. It involves the ingestion of data from a source, applying a series of transformations, and making the final result available to be queried by a model for training purposes. You can construct feature engineering pipelines to resemble data engineering pipelines, having schedules, specific source and sink destinations, and availability for querying. However, this configuration would only really apply once you have surpassed the experimentation stage and determined a need for a consistent flow of new feature data.
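The ingest, transform, and query flow described above can be sketched with the Python standard library alone. This is a minimal sketch under stated assumptions, not a production design: the CSV source, the `customer_features` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for the real sink.

```python
import csv
import io
import sqlite3

# Hypothetical source: a CSV export of raw purchase events.
SOURCE_CSV = "customer_id,amount\nc1,40\nc1,60\nc2,200\n"


def ingest(csv_text):
    """Read raw rows from the source."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def transform(rows):
    """Aggregate raw rows into one feature row per customer."""
    totals = {}
    for row in rows:
        count, total = totals.get(row["customer_id"], (0, 0.0))
        totals[row["customer_id"]] = (count + 1, total + float(row["amount"]))
    return [(cid, count, total / count) for cid, (count, total) in sorted(totals.items())]


def load(conn, feature_rows):
    """Write features to the sink so a training job can query them."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customer_features "
        "(customer_id TEXT PRIMARY KEY, purchase_count INTEGER, avg_purchase_value REAL)"
    )
    # INSERT OR REPLACE keeps scheduled reruns idempotent per customer.
    conn.executemany("INSERT OR REPLACE INTO customer_features VALUES (?, ?, ?)", feature_rows)
    conn.commit()


def run_pipeline(conn, csv_text=SOURCE_CSV):
    """One scheduled run: source -> transformations -> sink."""
    load(conn, transform(ingest(csv_text)))


conn = sqlite3.connect(":memory:")
run_pipeline(conn)

# A training job queries the sink rather than the raw source.
training_rows = conn.execute(
    "SELECT customer_id, purchase_count, avg_purchase_value "
    "FROM customer_features ORDER BY customer_id"
).fetchall()
```

During experimentation, calling `transform` directly on a sample is usually enough. The scheduling and the sink only earn their keep once a model needs a steady flow of fresh feature data.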

How feature engineering and data engineering differ

1. Functions

Functionally, nothing differentiates data from features: both are data points. Where feature engineering and data engineering really differ is in the objectives and motivations for constructing the pipelines. In general, data engineering serves a broader, more unified purpose than feature engineering. Data engineering platforms are constructed to be flexible and universal, ingesting various types and sources of data into a unified storage location where any number of transformations and use cases can be applied. The intent of a well-constructed fact table or gold layer in a data lake is to provide a single source of truth that answers many different questions, produces many reports, and can be consumed by many downstream customers.

2. Practice

And in practice, an organization’s data engineering team will be responsible for the curation and maintenance of all data pipelines, not just those that relate to machine learning. These pipelines may power BI dashboards used by C-Suite, auditing reports that feed payroll, or event logs that show a user’s history of actions within the application.

Feature engineering, on the other hand, serves a specific purpose: finding the tailored inputs and columns that will generate the best predictive results for a machine learning model. Data scientists and machine learning engineers are not tasked with developing a universal data model that will ingest all data points throughout an organization. They just need to select, curate, and clean the data needed to power their models.

3. Machine learning

Now, as machine learning teams grow and begin to incorporate more and more data sources into their models, their feature engineering platform may start to resemble a larger data engineering platform in the tools and methodologies it employs. But the intent is not to establish flexible data models that can be used throughout the organization. It is simply to power their machine learning models.